Abstract

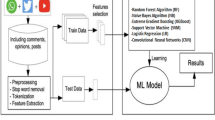

As the internet continues to grow, its influence on society has deepened. Social media is one example: even children are active on it and can be easily influenced by it. Social media can also be a breeding ground for cyberbullying, which can lead to serious mental health consequences for victims. Content moderation systems can be an effective way to counter such problems. They are designed to monitor and manage online content so that it adheres to specific guidelines and standards. This paper describes one such system based on natural language processing and compares various algorithms with the aim of improving accuracy and precision. In testing, logistic regression yielded the highest precision and accuracy among the algorithms compared.

Copyright information

© 2024 The Author(s), under exclusive license to Springer Nature Singapore Pte Ltd.

About this paper

Cite this paper

Gulati, G., Jha, H.A., Jain, R., Sharma, M., Chaudhary, V. (2024). Content Moderation System Using Machine Learning Techniques. In: Hassanien, A.E., Castillo, O., Anand, S., Jaiswal, A. (eds) International Conference on Innovative Computing and Communications. ICICC 2023. Lecture Notes in Networks and Systems, vol 731. Springer, Singapore. https://doi.org/10.1007/978-981-99-4071-4_58

DOI: https://doi.org/10.1007/978-981-99-4071-4_58

Publisher Name: Springer, Singapore

Print ISBN: 978-981-99-4070-7

Online ISBN: 978-981-99-4071-4

eBook Packages: Engineering, Engineering (R0)