Abstract

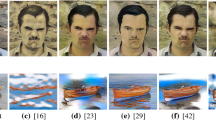

Existing neural style transfer (NST) algorithms often fail to place styles in appropriate locations, so the output image does not preserve the correct spatial structure of the objects being painted. We propose a deep semantic matching-based multi-scale (DSM-MS) neural style transfer method that guides style placement using prior spatial segmentation and illumination information from the input images. First, in a real drawing process, before deciding how to paint a stroke, an artist observes and interprets the subject, segmenting the space into regions, objects, and structures and analyzing the illumination conditions on each object. To simulate these two visual cognition processes, we define a deep semantic space (DSS) and propose a method for computing DSSs using manual image segmentation, automatic illumination estimation, and a convolutional neural network (CNN). Second, we define a loss function, named deep semantic loss, which uses the DSS to guide style transfer. Third, we propose a multi-scale optimization strategy to improve the efficiency of our method. Finally, we apply our method to an interdisciplinary task for the first time: painterly rendering of 3D scenes by neural style transfer. The experimental results show that our method synthesizes images that better preserve the original structures, with more reasonable placement of each style and better visual aesthetics.
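To make the idea of segmentation-guided style placement concrete, the sketch below computes a region-masked Gram-matrix style loss with NumPy. This is not the paper's deep semantic loss, whose definition is not given in the abstract. It is a simpler, commonly used stand-in in which style statistics are compared only between corresponding segmented regions. The function names `masked_gram` and `region_style_loss`, the array shapes, and the assumption of binary masks already resized to the feature-map resolution are all hypothetical choices made for illustration.

```python
import numpy as np


def masked_gram(features, mask):
    """Gram matrix of CNN features restricted to one region.

    features: array of shape (C, H, W), e.g. one CNN layer's activations.
    mask:     array of shape (H, W) with values in {0, 1} (assumed binary).
    """
    channels = features.shape[0]
    # Zero out activations outside the region, then flatten spatial dims.
    flat = (features * mask[None, :, :]).reshape(channels, -1)
    # Normalize by region area so large and small regions are comparable.
    area = max(float(mask.sum()), 1.0)
    return flat @ flat.T / area


def region_style_loss(feat_out, feat_style, masks_out, masks_style):
    """Sum of squared Gram differences over corresponding regions.

    masks_out[i] and masks_style[i] are assumed to label the same
    semantic region in the output and style images respectively.
    """
    loss = 0.0
    for m_out, m_style in zip(masks_out, masks_style):
        diff = masked_gram(feat_out, m_out) - masked_gram(feat_style, m_style)
        loss += float(np.sum(diff ** 2))
    return loss
```

In a full pipeline the features would come from a pretrained CNN and the loss would be minimized with respect to the output image; here random arrays of shape `(C, H, W)` are enough to exercise the functions. Identical features and masks give a loss of zero, which is a convenient sanity check.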

Acknowledgement

This work was supported in part by the National Key Research and Development Program of China (No. 2017YFB1302200) and the Key Project of Shaanxi Province (No. 2018ZDCXL-GY-06-07).

Copyright information

© 2019 Springer Nature Singapore Pte Ltd.

About this paper

Cite this paper

Yu, J., **, L., Chen, J., Tian, Z., Lan, X. (2019). Multi-scale Neural Style Transfer Based on Deep Semantic Matching. In: Sun, F., Liu, H., Hu, D. (eds) Cognitive Systems and Signal Processing. ICCSIP 2018. Communications in Computer and Information Science, vol 1006. Springer, Singapore. https://doi.org/10.1007/978-981-13-7986-4_17

DOI: https://doi.org/10.1007/978-981-13-7986-4_17

Publisher Name: Springer, Singapore

Print ISBN: 978-981-13-7985-7

Online ISBN: 978-981-13-7986-4

eBook Packages: Computer Science, Computer Science (R0)