Abstract

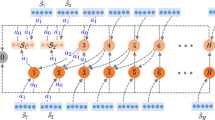

Future AGIs will need to solve large reinforcement-learning problems involving complex reward functions with multiple reward sources. One way to make progress on such problems is to decompose them into smaller regions that can be solved efficiently. We introduce a novel modular version of Least Squares Policy Iteration (LSPI), called M-LSPI, which (1) breaks up Markov decision problems (MDPs) into a set of mutually exclusive regions and (2) iteratively solves each region by a single matrix inversion and then combines the solutions by value iteration. The resulting algorithm leverages regional decomposition to solve the MDP efficiently. On both structured and unstructured MDPs, M-LSPI yields substantial improvements over traditional algorithms in time to convergence to the value function of the optimal policy as the number of states increases, especially as the discount factor approaches one.
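The regional idea can be illustrated with a minimal sketch. It is not the authors' M-LSPI: it only evaluates a fixed policy (no policy improvement step), and the function name `regional_evaluation`, the random test chain, and the three hand-picked regions are assumptions made for illustration. Each region is solved with one linear solve while values outside the region are held fixed, and repeated sweeps over the regions propagate values between them, in the style of block Gauss-Seidel iteration.

```python
import numpy as np

def regional_evaluation(P, r, regions, gamma=0.95, tol=1e-10, max_sweeps=10_000):
    """Evaluate a fixed policy by solving one region at a time (illustrative).

    P: (n, n) row-stochastic transition matrix under the fixed policy.
    r: (n,) expected one-step rewards.
    regions: list of index arrays that partition range(n).
    Returns the value estimate and the number of sweeps performed.
    """
    n = len(r)
    v = np.zeros(n)
    for sweep in range(max_sweeps):
        v_old = v.copy()
        for idx in regions:
            outside = np.setdiff1d(np.arange(n), idx)
            # Bellman equations restricted to the region, with the values
            # of all outside states treated as constants.
            A = np.eye(len(idx)) - gamma * P[np.ix_(idx, idx)]
            b = r[idx] + gamma * P[np.ix_(idx, outside)] @ v[outside]
            v[idx] = np.linalg.solve(A, b)
        if np.max(np.abs(v - v_old)) < tol:
            return v, sweep + 1
    return v, max_sweeps

# Hypothetical test problem: a random 12-state chain split into 3 regions.
rng = np.random.default_rng(0)
n = 12
P = rng.random((n, n))
P /= P.sum(axis=1, keepdims=True)   # normalise rows to probabilities
r = rng.random(n)
regions = [np.arange(0, 4), np.arange(4, 8), np.arange(8, 12)]

v_regional, sweeps = regional_evaluation(P, r, regions)
# Reference answer: solve the full system (I - gamma * P) v = r in one step.
v_direct = np.linalg.solve(np.eye(n) - 0.95 * P, r)
```

In this sketch each per-region solve involves a 4 × 4 system rather than the full 12 × 12 one. Whether that pays off depends on how weakly the regions are coupled, which is the property a regional decomposition aims to exploit.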




Copyright information

© 2012 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Gisslen, L., Ring, M., Luciw, M., Schmidhuber, J. (2012). Modular Value Iteration through Regional Decomposition. In: Bach, J., Goertzel, B., Iklé, M. (eds) Artificial General Intelligence. AGI 2012. Lecture Notes in Computer Science, vol 7716. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-642-35506-6_8


DOI: https://doi.org/10.1007/978-3-642-35506-6_8

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-642-35505-9

Online ISBN: 978-3-642-35506-6

eBook Packages: Computer Science, Computer Science (R0)