Abstract

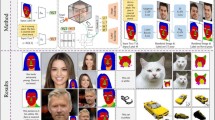

Over the years, 2D GANs have achieved great success in photorealistic portrait generation. However, they lack 3D understanding in the generation process and thus suffer from multi-view inconsistency. To alleviate this issue, many 3D-aware GANs have been proposed and have shown notable results, but they struggle with editing semantic attributes, and their controllability and interpretability have received little attention. In this work, we propose two solutions to overcome these weaknesses of 2D GANs and 3D-aware GANs. We first introduce a novel 3D-aware GAN, SURF-GAN, which is capable of discovering semantic attributes during training and controlling them in an unsupervised manner. We then inject the prior of SURF-GAN into StyleGAN to obtain a high-fidelity 3D-controllable generator. Unlike existing latent-based methods, which allow implicit pose control, the proposed 3D-controllable StyleGAN enables explicit pose control over portrait generation. This distillation allows direct compatibility between 3D control and many StyleGAN-based techniques (e.g., inversion and stylization), and also brings an advantage in terms of computational resources. Our code is available at https://github.com/jgkwak95/SURF-GAN.

Acknowledgement

This work was supported by DMLab. We also thank the anonymous ECCV reviewers for their constructive suggestions and discussions on our paper.

© 2022 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Kwak, Jg., Li, Y., Yoon, D., Kim, D., Han, D., Ko, H. (2022). Injecting 3D Perception of Controllable NeRF-GAN into StyleGAN for Editable Portrait Image Synthesis. In: Avidan, S., Brostow, G., Cissé, M., Farinella, G.M., Hassner, T. (eds) Computer Vision – ECCV 2022. ECCV 2022. Lecture Notes in Computer Science, vol 13677. Springer, Cham. https://doi.org/10.1007/978-3-031-19790-1_15

DOI: https://doi.org/10.1007/978-3-031-19790-1_15

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-19789-5

Online ISBN: 978-3-031-19790-1

eBook Packages: Computer Science, Computer Science (R0)