Abstract

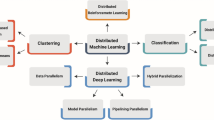

A distributed system is a set of logical or physical units capable of performing computations and communicating with each other. Nowadays, these systems are at the heart of technologies such as the Internet of Things (IoT) and the Internet of Vehicles (IoV). They collect data, perform computations and make decisions. Meanwhile, deep learning (DL) has led to enormous progress in the field of artificial intelligence. Since the accuracy that DL achieves when trained on a reference dataset on a single machine is well known, it becomes more interesting to train several models and distribute the intelligence over the different nodes of the system using different computation strategies.

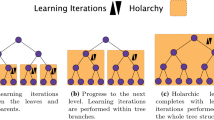

In this paper, we propose a method that trains deep neural networks on several machines by distributing the dataset before training starts and ensuring communication between the machines, in order to improve computation time and accuracy.
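As a rough illustration of the idea of splitting the dataset before training and letting the machines communicate, the following minimal sketch simulates several workers in a single process. It is not the authors' implementation: the abstract does not say how the machines exchange information, so parameter averaging after each round is an assumption, a small linear model stands in for a deep neural network, and all names (`split_dataset`, `local_step`, `average`, `train`) are hypothetical.

```python
import random

def make_dataset(n, seed=0):
    """Synthetic 1-D regression data: y = 3x + 1 plus small noise (illustrative only)."""
    rng = random.Random(seed)
    data = []
    for _ in range(n):
        x = rng.uniform(-1.0, 1.0)
        y = 3.0 * x + 1.0 + rng.gauss(0.0, 0.01)
        data.append((x, y))
    return data

def split_dataset(data, num_workers):
    """Distribute the dataset before training: one disjoint shard per worker."""
    return [data[i::num_workers] for i in range(num_workers)]

def local_step(params, shard, lr):
    """One gradient-descent step on the mean squared error over a worker's shard."""
    w, b = params
    grad_w = grad_b = 0.0
    for x, y in shard:
        err = (w * x + b) - y
        grad_w += 2.0 * err * x
        grad_b += 2.0 * err
    n = len(shard)
    return (w - lr * grad_w / n, b - lr * grad_b / n)

def average(param_list):
    """Communication step (assumed): average the parameters of all workers."""
    k = len(param_list)
    return (sum(p[0] for p in param_list) / k,
            sum(p[1] for p in param_list) / k)

def train(data, num_workers=4, rounds=200, lr=0.1):
    """Simulate the workers sequentially; real machines would run in parallel."""
    shards = split_dataset(data, num_workers)
    params = (0.0, 0.0)
    for _ in range(rounds):
        # Every worker starts from the shared parameters and uses only its shard.
        local = [local_step(params, shard, lr) for shard in shards]
        params = average(local)
    return params

w, b = train(make_dataset(400))
print(f"learned w={w:.3f}, b={b:.3f}")
```

With equally sized shards, averaging the workers' one-step updates matches a full-batch gradient step, so the sketch mainly shows where the data split and the communication step sit in the training loop, not any speed or accuracy gain.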

Copyright information

© 2022 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Dahaoui, I., Mosbah, M., Zemmari, A. (2022). Distributed Training from Multi-sourced Data. In: Barolli, L., Hussain, F., Enokido, T. (eds) Advanced Information Networking and Applications. AINA 2022. Lecture Notes in Networks and Systems, vol 450. Springer, Cham. https://doi.org/10.1007/978-3-030-99587-4_29

DOI: https://doi.org/10.1007/978-3-030-99587-4_29

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-99586-7

Online ISBN: 978-3-030-99587-4

eBook Packages: Intelligent Technologies and Robotics (R0)