Abstract

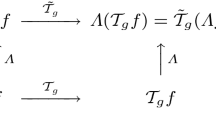

This paper presents a hybrid approach between scale-space theory and deep learning, in which a deep learning architecture is constructed by coupling parameterized scale-space operations in cascade. By sharing the learnt parameters between multiple scale channels, and by using the transformation properties of the scale-space primitives under scaling transformations, the resulting network becomes provably scale covariant. By additionally performing max pooling over the multiple scale channels, the resulting network architecture for image classification also becomes provably scale invariant. We investigate the performance of such networks on the MNISTLargeScale dataset, which contains rescaled images from the original MNIST dataset, with scale variations over a factor of 4 in the training data and over a factor of 16 in the testing data. The experiments demonstrate that the approach allows for scale generalization, enabling good performance when classifying patterns at scales not present in the training data.
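The mechanism described in the abstract (shared parameters across scale channels, followed by max pooling over those channels) can be illustrated with a minimal one-dimensional, single-layer sketch. This is not the paper's implementation: the sampled Gaussian derivative kernels, the ReLU nonlinearity, the fixed stand-in weights `WEIGHTS`, the scale levels `SIGMAS`, and all function names are assumptions chosen for illustration only.

```python
import math

def gaussian_derivative_kernel(sigma, order):
    """Sampled 1D Gaussian derivative (order 1 or 2), multiplied by
    sigma**order so that the responses are scale-normalised."""
    radius = int(math.ceil(4 * sigma))
    kernel = []
    for x in range(-radius, radius + 1):
        g = math.exp(-x * x / (2 * sigma ** 2)) / (math.sqrt(2 * math.pi) * sigma)
        if order == 1:
            d = -x / sigma ** 2 * g
        elif order == 2:
            d = (x * x / sigma ** 4 - 1 / sigma ** 2) * g
        else:
            raise ValueError("order must be 1 or 2")
        kernel.append(sigma ** order * d)
    return kernel

def convolve_same(signal, kernel):
    """Zero-padded convolution with an output as long as the input."""
    radius = len(kernel) // 2
    n = len(signal)
    out = []
    for i in range(n):
        acc = 0.0
        for j, k in enumerate(kernel):
            idx = i - (j - radius)
            if 0 <= idx < n:
                acc += k * signal[idx]
        out.append(acc)
    return out

def scale_channel(signal, sigma, weights):
    """One scale channel: a weighted sum of scale-normalised derivatives,
    a ReLU, and a max over space. The weights are the same for every sigma."""
    c1, c2 = weights
    lx = convolve_same(signal, gaussian_derivative_kernel(sigma, 1))
    lxx = convolve_same(signal, gaussian_derivative_kernel(sigma, 2))
    return max(max(0.0, c1 * a + c2 * b) for a, b in zip(lx, lxx))

def scale_invariant_response(signal, sigmas, weights):
    """Max pooling over the scale channels."""
    return max(scale_channel(signal, s, weights) for s in sigmas)

def blob(width, length=129):
    """Hypothetical test pattern: a unit-height Gaussian bump."""
    centre = length // 2
    return [math.exp(-((i - centre) ** 2) / (2 * width ** 2)) for i in range(length)]

WEIGHTS = (0.5, -1.0)      # stand-ins for learnt parameters (assumption)
SIGMAS = [1, 2, 4, 8, 16]  # scale levels of the channels (assumption)

small = scale_invariant_response(blob(2), SIGMAS, WEIGHTS)
large = scale_invariant_response(blob(4), SIGMAS, WEIGHTS)
single_small = scale_channel(blob(2), 2, WEIGHTS)
single_large = scale_channel(blob(4), 2, WEIGHTS)
print(small, large, single_small, single_large)
```

In this sketch, `small` and `large` should come out close to each other even though the second pattern is twice as wide, whereas the single-channel responses `single_small` and `single_large` differ clearly. The remaining discrepancy between `small` and `large` stems from sampling on a discrete grid and from the finite set of scale channels.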

The support from the Swedish Research Council (contract 2018-03586) is gratefully acknowledged.

Copyright information

© 2021 Springer Nature Switzerland AG

About this paper

Cite this paper

Lindeberg, T. (2021). Scale-Covariant and Scale-Invariant Gaussian Derivative Networks. In: Elmoataz, A., Fadili, J., Quéau, Y., Rabin, J., Simon, L. (eds) Scale Space and Variational Methods in Computer Vision. SSVM 2021. Lecture Notes in Computer Science, vol 12679. Springer, Cham. https://doi.org/10.1007/978-3-030-75549-2_1

DOI: https://doi.org/10.1007/978-3-030-75549-2_1

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-75548-5

Online ISBN: 978-3-030-75549-2

eBook Packages: Computer Science, Computer Science (R0)