Abstract

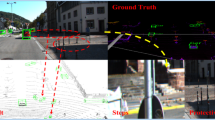

To address the problems of disorder, sparseness, and floating that occur in 3D LiDAR point clouds in the road environment, we propose a novel deep CNN architecture for real-time point cloud feature extraction. Specifically, we first encode the 3D position of each point by its index in the vertical and horizontal directions. In this way, the 3D point cloud is converted into a multi-channel point feature map. Then, through multi-level feature extraction and fusion on the point feature map, semantic segmentation of the point cloud scene is achieved. Comprehensive experiments and ablation studies on publicly available point cloud datasets demonstrate the superiority of our approach. More importantly, our approach has been successfully applied to perception in a real-world self-driving system. The source code is publicly available at: https://github.com/Lab1028-19/A-Novel-CNN.
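The abstract does not spell out the exact encoding, so the following is only a minimal sketch of one common way to index points by vertical and horizontal direction: a spherical projection onto a rows × cols grid. The function name `project_to_feature_map`, the five channels (x, y, z, intensity, range), the field-of-view parameters, and the keep-the-nearest-point rule are all assumptions made for illustration and are not taken from the paper or its repository.

```python
import math


def project_to_feature_map(points, rows, cols, fov_up_deg, fov_down_deg):
    """Project (x, y, z, intensity) points onto a rows x cols grid.

    Hypothetical sketch: each cell stores [x, y, z, intensity, range];
    cells that receive no point stay all-zero.
    """
    fov_up = math.radians(fov_up_deg)
    fov_down = math.radians(fov_down_deg)
    fov = fov_up - fov_down

    fmap = [[[0.0] * 5 for _ in range(cols)] for _ in range(rows)]

    for x, y, z, intensity in points:
        rng = math.sqrt(x * x + y * y + z * z)
        if rng == 0.0:
            continue  # a point at the sensor origin has no direction

        azimuth = math.atan2(y, x)       # horizontal angle in [-pi, pi]
        elevation = math.asin(z / rng)   # vertical angle
        if not (fov_down <= elevation <= fov_up):
            continue  # outside the assumed vertical field of view

        # Horizontal index from azimuth, vertical index from elevation.
        col = min(int((azimuth + math.pi) / (2.0 * math.pi) * cols), cols - 1)
        row = min(int((fov_up - elevation) / fov * rows), rows - 1)

        # Assumption: when several points fall in one cell, keep the nearest.
        cell = fmap[row][col]
        if cell[4] == 0.0 or rng < cell[4]:
            fmap[row][col] = [x, y, z, intensity, rng]

    return fmap


if __name__ == "__main__":
    demo = [(2.0, 0.0, 0.0, 0.9), (1.0, 0.0, 0.0, 0.5), (0.0, 3.0, 0.2, 0.1)]
    grid = project_to_feature_map(demo, rows=4, cols=8,
                                  fov_up_deg=10.0, fov_down_deg=-10.0)
    filled = sum(1 for r in grid for c in r if c[4] > 0.0)
    print("filled cells:", filled)
```

The resulting grid is dense and ordered, so it can be fed to a 2D CNN like a multi-channel image, which is the property the abstract relies on. Sparse regions simply appear as zero cells.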

This work was supported by the National Natural Science Foundation of China (No. 61976116, 61773117), Fundamental Research Funds for the Central Universities (No. 30920021135), and the Primary Research & Development Plan of Jiangsu Province - Industry Prospects and Common Key Technologies (No. BE2017157).

Copyright information

© 2020 Springer Nature Switzerland AG

About this paper

Cite this paper

Fan, D., Yao, Y., Cai, Y., Shu, X., Huang, P., Yang, W. (2020). A Novel CNN Architecture for Real-Time Point Cloud Recognition in Road Environment. In: Peng, Y., et al. Pattern Recognition and Computer Vision. PRCV 2020. Lecture Notes in Computer Science, vol 12305. Springer, Cham. https://doi.org/10.1007/978-3-030-60633-6_17

DOI: https://doi.org/10.1007/978-3-030-60633-6_17

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-60632-9

Online ISBN: 978-3-030-60633-6

eBook Packages: Computer Science (R0)