Abstract

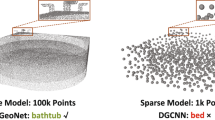

In this paper, we propose PUGeo-Net, a novel deep neural network for upsampling 3D point clouds. PUGeo-Net incorporates discrete differential geometry into deep learning by learning the first and second fundamental forms, which fully represent the local geometry up to rigid motion. Specifically, we encode the first fundamental form in a \(3\times 3\) linear transformation matrix \(\mathbf{T}\) for each input point. This matrix approximates the augmented Jacobian matrix of a local parameterization. It encodes the intrinsic information and builds a one-to-one correspondence between the 2D parametric domain and the 3D tangent plane, so that we can lift adaptively distributed 2D samples learned from the input to 3D space. We then use the learned second fundamental form to compute a normal displacement for each generated sample, which projects the sample onto the curved surface. As a by-product, PUGeo-Net computes normals for both the original and the generated points, which is highly desirable for surface reconstruction algorithms. We evaluate PUGeo-Net on a wide range of 3D models with sharp features and rich geometric details and observe that it consistently outperforms state-of-the-art methods in both accuracy and efficiency for upsampling factors from 4 to 16. We also verify the geometry-centric nature of PUGeo-Net quantitatively. In addition, PUGeo-Net handles noisy and non-uniformly distributed inputs well, validating its robustness. The code is publicly available at https://github.com/ninaqy/PUGeo.
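The geometry behind the two steps above (lifting 2D samples through \(\mathbf{T}\), then displacing them along the normal) can be illustrated with a small NumPy sketch. It is not the authors' implementation: it assumes the first two columns of \(\mathbf{T}\) are the tangent vectors \(\varphi_u, \varphi_v\) of a local parameterization \(\varphi(u, v)\), takes the first fundamental form as \(J^\top J\) with \(J = [\varphi_u, \varphi_v]\), and uses the normal part of the second-order Taylor term, \(\tfrac{1}{2}(L u^2 + 2 M u v + N v^2)\), as the displacement. In PUGeo-Net these quantities are predicted by the network; here they are supplied by hand, and the function names are hypothetical. The linked repository holds the actual code.

```python
import numpy as np


def first_fundamental_form(T: np.ndarray) -> np.ndarray:
    """Return the 2x2 metric [[E, F], [F, G]] from the tangent columns of T.

    Assumption: the columns of T are (phi_u, phi_v, n), i.e. the Jacobian of a
    local parameterization phi(u, v) augmented with a normal direction.
    """
    J = T[:, :2]  # 3x2 Jacobian whose columns are phi_u and phi_v
    # E = phi_u.phi_u, F = phi_u.phi_v, G = phi_v.phi_v
    return J.T @ J


def normal_from_T(T: np.ndarray) -> np.ndarray:
    """Unit normal computed as the normalized cross product phi_u x phi_v.

    The third column of T is ignored here; it is assumed to be parallel
    to this vector.
    """
    n = np.cross(T[:, 0], T[:, 1])
    return n / np.linalg.norm(n)


def lift_samples(p: np.ndarray, T: np.ndarray, uv: np.ndarray,
                 second_form: tuple) -> np.ndarray:
    """Lift 2D parametric samples around the point p onto a curved surface.

    p: (3,) input point; T: (3, 3) augmented Jacobian; uv: (k, 2) samples in
    the parametric domain; second_form: coefficients (L, M, N) of the second
    fundamental form at p. Returns (k, 3) points.
    """
    L, M, N = second_form
    u, v = uv[:, 0], uv[:, 1]
    # Step 1: map (u, v) onto the tangent plane through p.
    tangent_pts = p + uv @ T[:, :2].T
    # Step 2: normal displacement from the quadratic (osculating) term.
    delta = 0.5 * (L * u**2 + 2.0 * M * u * v + N * v**2)
    return tangent_pts + delta[:, None] * normal_from_T(T)


if __name__ == "__main__":
    # Paraboloid z = (x^2 + y^2) / 2 at the origin: tangent basis e1, e2,
    # normal e3, and second fundamental form L = N = 1, M = 0.
    p = np.zeros(3)
    T = np.eye(3)
    uv = np.array([[0.1, 0.0], [0.0, 0.1], [0.1, 0.1]])
    print(first_fundamental_form(T))
    print(lift_samples(p, T, uv, (1.0, 0.0, 1.0)))
```

Because the displacement is measured along the normal, the same construction yields a normal for every generated point, which is the by-product the abstract mentions. A sheared \(\mathbf{T}\) (non-orthonormal tangent columns) changes \(E, F, G\) and hence how uniformly the 2D samples spread over the surface.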

Acknowledgement

This work was supported in part by the Natural Science Foundation of China under Grant 61871342, and in part by the Hong Kong Research Grants Council under grants 9048123 (CityU 21211518) and 9042955 (CityU 11202320).

Electronic supplementary material

One electronic supplementary material file accompanies this chapter online.

Copyright information

© 2020 Springer Nature Switzerland AG

About this paper

Cite this paper

Qian, Y., Hou, J., Kwong, S., He, Y. (2020). PUGeo-Net: A Geometry-Centric Network for 3D Point Cloud Upsampling. In: Vedaldi, A., Bischof, H., Brox, T., Frahm, JM. (eds) Computer Vision – ECCV 2020. ECCV 2020. Lecture Notes in Computer Science, vol 12364. Springer, Cham. https://doi.org/10.1007/978-3-030-58529-7_44

DOI: https://doi.org/10.1007/978-3-030-58529-7_44

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-58528-0

Online ISBN: 978-3-030-58529-7

eBook Packages: Computer Science, Computer Science (R0)