Abstract

Referential communication is common in physical remote collaboration. To convey instructions successfully, remote instructors have to refer to objects in the local worker’s environment. However, nonverbal behaviors are known to be difficult to convey correctly through current remote collaboration systems. We therefore focused on enhancing verbal communication and developed an AR-based remote collaboration system. Past research has shown that annotations and labels can support communication in collaboration, so we propose combining the two functions to achieve smooth remote collaboration. For the labeling function, we extended the work of Chang et al. [1] and introduced semantic virtual labels attached to the objects in the local worker’s environment. For the annotation function, we introduced a 3D pointer that the instructor can move to draw the worker’s attention easily. In addition, the 3D pointer can highlight the virtual labels to improve mutual understanding between the instructor and the worker. Finally, a simple experiment was conducted to evaluate the usability of the proposed system.


1 Introduction

Nowadays, remote collaboration is widely used in many fields (e.g., education and manufacturing): two participants who are far away from each other work together using remote collaboration tools. Among the various types of remote collaboration, we focused on physical remote collaboration, in which participants interact not only with each other but also with the objects in the environment. To refer to objects during collaboration, participants use many verbal expressions and nonverbal behaviors (e.g., gestures and gaze) to reduce misunderstandings.

However, nonverbal behaviors are known to be difficult to convey correctly through current teleconferencing tools. As a result, referential communication often cannot be conducted successfully [4], and remote collaboration usually does not reach the quality of face-to-face collaboration. In this research, we focused on verbal communication and aimed to make referential communication more efficient in order to improve the quality of remote collaboration.

Several existing systems aim to improve verbal communication during collaboration. Gauglitz et al. [2] developed an AR system that allowed instructors to draw circles and arrows on a tablet showing the worker’s environment, which helped participants understand what their interlocutor was talking about. Chang et al. [1] developed a tablet-based remote instruction system that allowed instructors to give objects unique alphabetical names (e.g., A, B, C); the given names were shown as virtual labels on both the instructor’s and the worker’s tablets. By naming the objects, the participants built up a mutual understanding of how to refer to them during remote instruction.

However, we considered that assigning new names to objects improves communication only when the working area is narrow. In a wide working area, participants often cannot see the labels, and it is hard for them to remember and use the labels. We considered that virtual labels with semantic meanings could improve the quality of collaboration. Thus, in this paper, we first conducted a pilot experiment to examine whether labels with semantic meanings support collaboration. Based on the result, we then developed an AR-based remote collaboration system that supports semantic labels and a 3D pointer. Finally, a simple experiment was conducted to evaluate the usability of the proposed system.

2 Pilot Experiment

2.1 Experimental Design

Hypothesis. In the pilot experiment, we investigated how participants used two types of AR labels: AR labels with unique names (Simple Label) and AR labels with detailed features describing the corresponding object (Detailed Label). We hypothesized that both types of AR labels would help participants build up mutual knowledge and would result in better collaboration quality than remote collaboration without AR labels.

Method. The experiment used a within-participant design, and the independent variable was the label type: detailed label, simple label, and no label. In the detailed label condition, we attached to each Lego block a semi-transparent virtual label listing two or three features that can be used to describe the block. The features were color, size, and shape (Fig. 1). In the simple label condition, a semi-transparent virtual label with a unique letter was attached to each Lego block to give it a new name (Fig. 2). The font size and label size were the same in both conditions to reduce bias. In the no label condition, no label was shown.
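As an illustration of the two label conditions, the following minimal sketch generates both label types for a set of blocks. The `Block` class, the function names, and the sample feature values are hypothetical and are not taken from the system described here; the sketch only assumes that a simple label is a unique letter and that a detailed label lists whichever of the three features are available.

```python
from dataclasses import dataclass
from string import ascii_uppercase
from typing import Optional


@dataclass(frozen=True)
class Block:
    """One Lego block described by up to three features (hypothetical structure)."""
    color: Optional[str] = None
    size: Optional[str] = None
    shape: Optional[str] = None


def simple_labels(blocks):
    """Simple Label condition: give each block a unique letter (A, B, C, ...)."""
    if len(blocks) > len(ascii_uppercase):
        raise ValueError("more blocks than single-letter names")
    return [ascii_uppercase[i] for i in range(len(blocks))]


def detailed_labels(blocks):
    """Detailed Label condition: list the features that are set for each block."""
    labels = []
    for block in blocks:
        features = [f for f in (block.color, block.size, block.shape) if f]
        labels.append(", ".join(features))
    return labels


# Example with made-up blocks: one with three features, one with two.
blocks = [Block("red", "2x4", "brick"), Block("blue", "2x2")]
print(simple_labels(blocks))
print(detailed_labels(blocks))
```

With 16 blocks, as in the task described in this section, the simple labels would run from A to P under this scheme.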

Regarding the task, we chose an assembly task, which is common in manufacturing. We used Lego blocks, which are widely used in other remote collaboration studies. Although assembling Lego blocks is not a real-world task, it has many components in common with real-world tasks (e.g., seeking, pointing, grasping, and releasing). For each task, participants had to pick 11 of 16 Lego blocks and assemble two shapes: one consisting of 5 blocks and the other of 6 blocks.

Participants. Two participants from the University of Tsukuba were recruited for this experiment. Both were male and 23 years old.

Procedure. At the beginning of the experiment, the experimenter explained how to use the system. The participant who played the role of the worker was asked to wear an HTC VIVE HMD; the participant who played the role of the instructor was asked to sit at a table with a monitor (Fig. 3). The two participants were separated by a curtain so that they could not see each other but could hear each other clearly. A manual was given to the instructor. The instructor was then informed that he could see the worker’s working environment on the screen and had to give instructions to the worker. The worker was informed that he could see the real environment through the HMD and was asked to follow the instructions and assemble the corresponding Lego blocks.

The experiment consisted of four trials: a practice trial followed by three main trials. In each trial, the two participants were asked to perform the Lego block assembly task. After each task, both participants were asked to fill in the questionnaire. At the end of the experiment, a short unstructured interview was conducted.

Measure. To assess the effect of the labels, we considered two aspects: workload and quality of collaboration. For workload, we chose the NASA-TLX, a standard method developed by Hart and Staveland [3] to assess a person’s subjective workload. It consists of six sub-scales: mental demand, physical demand, temporal demand, performance, effort, and frustration. The NASA-TLX has two stages: rating scales and pairwise comparisons. In the rating stage, participants rated each sub-scale on a scale from 0 to 100 divided into 20 five-point steps (Table 1, Q1–Q6). In the pairwise comparison stage, participants compared each pair of sub-scales and judged which one was more important to the task.
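The two-stage scoring can be sketched as follows. This is a minimal illustration of the conventional weighted NASA-TLX procedure, in which the weight of each sub-scale is the number of times it was chosen in the 15 pairwise comparisons and the weighted sum of the ratings is divided by 15. The function names and the sample ratings are hypothetical, and whether the scores reported in this paper were normalized in exactly this way is an assumption.

```python
from itertools import combinations

SUBSCALES = ("mental", "physical", "temporal",
             "performance", "effort", "frustration")


def tally_weights(choices):
    """Count how often each sub-scale was chosen across the 15 pairs.

    `choices` maps each unordered pair (a frozenset of two sub-scale names)
    to the sub-scale judged more important for the task.
    """
    expected = {frozenset(pair) for pair in combinations(SUBSCALES, 2)}
    if set(choices) != expected:
        raise ValueError("need exactly one choice for each of the 15 pairs")
    weights = dict.fromkeys(SUBSCALES, 0)
    for pair, winner in choices.items():
        if winner not in pair:
            raise ValueError(f"{winner!r} is not a member of its pair")
        weights[winner] += 1
    return weights


def weighted_tlx(ratings, weights):
    """Weighted workload: sum(rating * weight) / total weight (15 pairs)."""
    total = sum(weights.values())
    return sum(ratings[s] * weights[s] for s in SUBSCALES) / total


# Made-up data: in every pair, the sub-scale listed first in SUBSCALES wins.
choices = {frozenset(pair): pair[0] for pair in combinations(SUBSCALES, 2)}
ratings = {"mental": 50, "physical": 20, "temporal": 40,
           "performance": 30, "effort": 60, "frustration": 10}
weights = tally_weights(choices)
print(weights)
print(weighted_tlx(ratings, weights))
```

Because the weights always sum to 15, the weighted score stays on the same 0 to 100 range as the individual ratings, which makes conditions directly comparable.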

Regarding the quality of collaboration, we adapted the questionnaire used by Chang et al. [1]. The questions are shown in Table 1 (Q7–Q13). In addition, we were interested in whether the labels had a negative effect on visibility, so we added the item “can clearly see the screen during the experiment” (Q14). The questionnaire used a 7-point rating scale.

2.2 Result and Discussion

To calculate the NASA-TLX score, we summed the sub-scale scores, each multiplied by its weight. The result is shown in Fig. 4. On the worker side, the simple label condition had the lowest workload, whereas the detailed label condition had the highest. According to the interview, this was mainly because the detailed labels contained too much information and reduced visibility. On the instructor side, the workload in both label conditions was lower than in the no label condition, which suggests that providing either type of label can reduce the instructor’s workload. A possible reason is that the instructor did not have to think about how to describe the objects.

The result for the quality of collaboration is shown in Fig. 5. Since this was an exploratory study, no statistical analysis was performed. However, we found that the worker and the instructor had different opinions on the quality of collaboration: the worker rated the simple label condition higher, whereas the instructor rated the detailed label condition higher. In the interview, both participants also mentioned that the task was simple and that the labels were often not useful.

Overall, we found that both simple labels and detailed labels have the potential to support remote collaboration. However, the visibility issue might have a negative effect on collaboration.

3 Proposed System

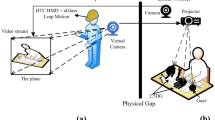

In this section, we introduce our proposed system. It is a dependent-view AR remote collaboration system in which the instructor and the worker share the same viewpoint. The architecture is shown in Fig. 6. We designed two functions to improve remote collaboration: virtual labels and a 3D pointer. The details are explained below.

3.1 Environment Capturing

In our proposed system, we used video see-through AR instead of optical see-through AR to provide a wide viewing angle. We used the ZED Mini, an RGB-based stereo camera, to capture the real-world environment and transfer the images to Unity 3D. The resolution was \(2560\times 720\) and the frame rate of the system was 60 Hz.
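To make the capture figures concrete, the sketch below splits a frame into per-eye images and computes the uncompressed data rate. It assumes that the \(2560\times 720\) frame is a side-by-side stereo pair, which is a common layout for stereo cameras but is not stated in this paper; the function names and the 3-bytes-per-pixel RGB format are likewise assumptions for illustration.

```python
def split_side_by_side(frame):
    """Split one side-by-side stereo frame into (left, right) images.

    `frame` is a list of pixel rows; the left half of each row is assumed
    to come from the left camera and the right half from the right camera.
    """
    width = len(frame[0])
    if width % 2 != 0:
        raise ValueError("a side-by-side frame must have an even width")
    half = width // 2
    left = [row[:half] for row in frame]
    right = [row[half:] for row in frame]
    return left, right


def raw_bytes_per_second(width, height, fps, bytes_per_pixel=3):
    """Uncompressed data rate of a video stream (RGB, 3 bytes per pixel by default)."""
    return width * height * bytes_per_pixel * fps


# A tiny 4x2 stand-in for the full frame, with pixels tagged by camera.
frame = [["L0", "L1", "R0", "R1"],
         ["L2", "L3", "R2", "R3"]]
left, right = split_side_by_side(frame)
print(left, right)
print(2560 // 2, "x", 720, "pixels per eye under the side-by-side assumption")
print(raw_bytes_per_second(2560, 720, 60), "bytes per second uncompressed")
```

Under these assumptions, each eye receives a 1280 by 720 image, and the raw stream at 60 Hz amounts to roughly 332 MB per second before any compression.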

3.2 Virtual Label

To implement the virtual labels, we attached ArUco markers, a type of AR marker, to each object. Three video cameras were set up to capture the ArUco markers, and the captured videos were streamed to Unity 3D.

We then used OpenCV for Unity to estimate the blocks’ positions. Semi-transparent virtual labels were attached at these positions to help instructors and workers build up a mutual understanding.
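How the position estimates from the three cameras are combined is not detailed here. One simple possibility, sketched below purely as an assumption, is to transform each camera's estimate of a marker into a shared world frame using known camera extrinsics and to average the results. All names, the extrinsic values, and the label offset are hypothetical; marker detection itself is not shown.

```python
def to_world(rotation, translation, point):
    """Map a point from a camera frame to the world frame: R * p + t."""
    return tuple(
        sum(rotation[r][c] * point[c] for c in range(3)) + translation[r]
        for r in range(3)
    )


def fuse_marker_position(observations, extrinsics):
    """Average the world-frame estimates of one marker.

    `observations` maps camera id -> (x, y, z) of the marker in that
    camera's frame (only cameras that detected the marker are present).
    `extrinsics` maps camera id -> (rotation 3x3, translation 3-vector).
    Returns None if no camera saw the marker.
    """
    estimates = [to_world(*extrinsics[cam], p) for cam, p in observations.items()]
    if not estimates:
        return None
    n = len(estimates)
    return tuple(sum(e[i] for e in estimates) / n for i in range(3))


def label_anchor(position, offset=(0.0, 0.05, 0.0)):
    """Place the label slightly above the block (offset is an assumed value)."""
    return tuple(p + o for p, o in zip(position, offset))


IDENTITY = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
# Made-up extrinsics: camera 1 is shifted by 1 m along x relative to camera 0.
extrinsics = {0: (IDENTITY, (0.0, 0.0, 0.0)), 1: (IDENTITY, (1.0, 0.0, 0.0))}
observations = {0: (1.0, 2.0, 3.0), 1: (0.0, 2.0, 3.0)}
position = fuse_marker_position(observations, extrinsics)
print(position, label_anchor(position))
```

Averaging is only one option; a real system might instead prefer the camera with the best view of the marker or weight estimates by detection quality.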

3.3 3D Pointer

In addition to providing virtual labels to achieve better mutual understanding, we included a 3D pointer as a metaphor for a human finger (Fig. 7). A ball-shaped pointer was created in Unity 3D and overlaid on the real-world environment. The instructor can move the pointer with a mouse, so the worker can easily understand what the instructor is referring to.

In addition, the instructor can use the pointer to change the color of the virtual labels, which further helps the instructor draw the worker’s attention.
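The highlighting behavior could be realized in several ways. The sketch below assumes a proximity rule: the label closest to the pointer, if it lies within a given radius, is drawn in a highlight color. The trigger rule, the radius, the color names, and all function names are assumptions for illustration and do not describe the actual Unity 3D implementation.

```python
import math


def nearest_label(pointer, labels, radius):
    """Return the name of the label closest to the pointer within `radius`.

    `labels` maps label name -> (x, y, z) position. Returns None if no
    label lies within the radius.
    """
    best_name, best_distance = None, radius
    for name, position in labels.items():
        distance = math.dist(pointer, position)
        if distance <= best_distance:
            best_name, best_distance = name, distance
    return best_name


def label_colors(pointer, labels, radius=0.1, normal="white", highlight="yellow"):
    """Color every label, highlighting only the one the pointer is near."""
    target = nearest_label(pointer, labels, radius)
    return {name: (highlight if name == target else normal) for name in labels}


# Made-up label positions in meters.
labels = {"red, 2x4": (0.0, 0.0, 0.0), "blue, 2x2": (1.0, 0.0, 0.0)}
print(label_colors((0.05, 0.0, 0.0), labels))  # pointer near the first label
print(label_colors((0.5, 0.0, 0.0), labels))   # pointer between the labels
```

Highlighting at most one label at a time keeps the worker's attention on a single object, which matches the purpose of the pointer described above.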

4 Evaluation Experiment

4.1 Experimental Design

Hypothesis. To evaluate the proposed system, we conducted a simple experiment. As mentioned in Sect. 1, our system is designed for a wide working area; thus, in this experiment, we asked participants to perform assembly tasks in a wide environment. In addition, the pilot experiment showed that visibility is an important issue, so we also wanted to know how participants behave after the virtual labels are removed. We hypothesized that the quality of remote collaboration with virtual labels with semantic meanings (Detailed Label) would be better than the baseline with virtual labels without semantic content (Simple Label). We also assessed how remote collaboration changed after the virtual labels were removed.

Method and Participant. The experiment used a between-participant design to reduce the learning effect. We compared two types of virtual labels: the simple label and the detailed label. The other settings were similar to those in Sect. 2.1; the only difference was that we prepared more types of Lego blocks to increase the complexity of the task. Four male participants from the University of Tsukuba were recruited for the experiment.

Procedure. After the two participants entered the room, the participant who played the role of the instructor sat at the table with a monitor, and the participant who played the role of the worker stood among three tables (Fig. 8). After the system was explained, the participants were asked to perform a practice task, in which several spray cans with caps of different colors, sizes, and shapes were placed on the three tables. The participants followed the given manual and switched the positions of the spray cans. The participants were then asked to perform two main tasks. In each main task, they assembled and dismantled Lego blocks according to the manual. In the first main task, participants saw different virtual labels according to their group; in the second main task, they performed the task without virtual labels. Both participants were asked to answer the questionnaire after each main task. At the end of the experiment, a brief unstructured interview was conducted.

4.2 Result and Discussion

The workload result is shown in Fig. 9, and the result for the quality of collaboration is shown in Fig. 10. According to Q14, the visibility issue was addressed. Regarding workload, the NASA-TLX scores of both the instructor and the worker were higher in the simple label condition than in the detailed label condition, which means that both experienced a lower workload in the detailed label condition. Regarding the quality of collaboration, the effect of the virtual labels differed between the instructor and the worker. The instructor rated the simple label higher than the detailed label while the virtual labels were present, but the ratings were reversed after the virtual labels were removed. The worker’s ratings, however, did not differ much. Based on the interview and observation, we also found that when the instructor in the detailed label condition tried to describe a feature of a Lego block, he tended to create his own description based on the labels attached to other Lego blocks. This phenomenon did not appear in the simple label condition.

However, this was a very simple evaluation intended as an initial test of the proposed system. In future work, we should recruit a sufficient number of participants to investigate the effect of virtual labels with semantic meanings. In addition, we should conduct conversation analysis to examine how the virtual labels changed users’ behaviors.

5 Conclusion

In this paper, we presented a novel AR remote collaboration system. The virtual labels and the 3D pointer allow users to communicate smoothly and thereby improve the quality of remote collaboration.

References

1. Chang, Y.C., Wang, H.C., Chu, H.K., Lin, S.Y., Wang, S.P.: AlphaRead: support unambiguous referencing in remote collaboration with readable object annotation. In: Proceedings of the 2017 ACM Conference on Computer Supported Cooperative Work and Social Computing, CSCW 2017, pp. 2246–2259. Association for Computing Machinery, New York (2017). https://doi.org/10.1145/2998181.2998258

2. Gauglitz, S., Nuernberger, B., Turk, M., Höllerer, T.: World-stabilized annotations and virtual scene navigation for remote collaboration. In: Proceedings of the 27th Annual ACM Symposium on User Interface Software and Technology, pp. 449–459 (2014)

3. Hart, S.G., Staveland, L.E.: Development of NASA-TLX (task load index): results of empirical and theoretical research. Hum. Ment. Workload 1(3), 139–183 (1988)

4. Jones, B., Witcraft, A., Bateman, S., Neustaedter, C., Tang, A.: Mechanics of camera work in mobile video collaboration. In: Proceedings of the 33rd Annual ACM Conference on Human Factors in Computing Systems, CHI 2015, pp. 957–966. Association for Computing Machinery, New York (2015). https://doi.org/10.1145/2702123.2702345

Acknowledgement

This work was supported by JSPS KAKENHI Grant Number 17H01771.


© 2020 Springer Nature Switzerland AG


Cite this paper

Wang, TY., Sato, Y., Otsuki, M., Kuzuoka, H., Suzuki, Y. (2020). Developing an AR Remote Collaboration System with Semantic Virtual Labels and a 3D Pointer. In: Yamamoto, S., Mori, H. (eds) Human Interface and the Management of Information. Interacting with Information. HCII 2020. Lecture Notes in Computer Science, vol 12185. Springer, Cham. https://doi.org/10.1007/978-3-030-50017-7_30


DOI: https://doi.org/10.1007/978-3-030-50017-7_30


Publisher Name: Springer, Cham

Print ISBN: 978-3-030-50016-0

Online ISBN: 978-3-030-50017-7

eBook Packages: Computer Science (R0)