Abstract

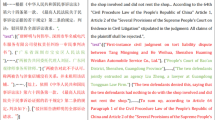

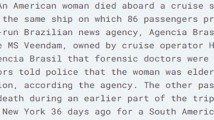

One limitation of automatic summarization is the difficulty of accounting for and reflecting the implicit information conveyed between different texts and the influence of the scene. In particular, in journalism, news headlines should be generated with respect to a specific scene and topic. Sequence-to-Sequence (Seq2Seq) models with attention have shown great success in summarization. However, such models focus only on features of the text itself, not on the implicit information between different texts or on the influence of the scene. In this work, we present a combination of techniques that harness scene information, as reflected by the word-topic distribution, to improve abstractive sentence summarization. The model uses the word-topic distribution of an LDA topic model as an external attention mechanism to improve summarization results. It consists of an RNN encoder and an RNN decoder: as in previous work, the encoder embeds the original text into a low-dimensional dense vector, and the decoder uses an attention mechanism to incorporate both the word-topic distribution and the encoder's low-dimensional dense vector. The proposed approach is evaluated on the CNN/Daily Mail dataset and a citation dataset. The results show that it outperforms the aforementioned methods.
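The abstract does not give the exact formula by which the decoder combines the word-topic distribution with the encoder output, so the following is only a minimal sketch of one plausible reading: additive (Bahdanau-style) attention whose score for each source word also depends on that word's topic vector. The function name `topic_attention`, the weight matrices `W_h`, `W_s`, `W_z`, the vector `v`, and all dimensions are assumptions made for illustration, and the topic vectors are hard-coded rather than taken from a trained LDA model.

```python
import math
import random

def matvec(W, x):
    """Multiply matrix W (a list of rows) by vector x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def topic_attention(enc_states, dec_state, word_topics, W_h, W_s, W_z, v):
    """Additive attention whose scores also depend on per-word topic vectors.

    enc_states : T encoder hidden vectors (each of length d)
    dec_state  : current decoder hidden vector (length d)
    word_topics: T topic distributions, one per source word (each of length K)
    Returns (attention weights over the T source positions, context vector).
    """
    proj_s = matvec(W_s, dec_state)  # identical for every source position
    scores = []
    for h_t, z_t in zip(enc_states, word_topics):
        proj_h = matvec(W_h, h_t)
        proj_z = matvec(W_z, z_t)  # assumed way of injecting topic information
        hidden = [math.tanh(a + b + c) for a, b, c in zip(proj_h, proj_s, proj_z)]
        scores.append(sum(vi * hi for vi, hi in zip(v, hidden)))
    weights = softmax(scores)
    d = len(enc_states[0])
    # Context vector: attention-weighted sum of the encoder states.
    context = [sum(w * h[i] for w, h in zip(weights, enc_states)) for i in range(d)]
    return weights, context

def random_matrix(rows, cols, rng):
    """Small random matrix standing in for learned parameters."""
    return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]

rng = random.Random(0)
T, d, K, a = 4, 3, 2, 5  # source length, hidden size, topics, attention size
enc_states = random_matrix(T, d, rng)
dec_state = [rng.uniform(-0.5, 0.5) for _ in range(d)]
# Toy word-topic distributions; in practice these would come from an LDA model.
word_topics = [[0.9, 0.1], [0.2, 0.8], [0.5, 0.5], [0.7, 0.3]]
W_h = random_matrix(a, d, rng)
W_s = random_matrix(a, d, rng)
W_z = random_matrix(a, K, rng)
v = [rng.uniform(-0.5, 0.5) for _ in range(a)]

weights, context = topic_attention(enc_states, dec_state, word_topics,
                                   W_h, W_s, W_z, v)
```

In this sketch, `weights` is a probability distribution over the four source words and `context` is the vector the decoder would consume at the current step. Adding the topic term inside the `tanh` is one design choice among several; concatenating the topic vector to the encoder state, or computing a separate topic attention and mixing the two, would be equally consistent with the abstract.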

Acknowledgments

This work was partially supported by the following projects: the Natural Science Foundation of Guangdong Province, China (No. 2016A030313441), and the Research and Reform Project of Higher Education of Guangdong Province (outcome-based education on data science talent cultivation model construction and innovation practice).

Copyright information

© 2019 Springer Nature Switzerland AG

About this paper

Cite this paper

Pan, HX., Liu, H., Tang, Y. (2019). A Sequence-to-Sequence Text Summarization Model with Topic Based Attention Mechanism. In: Ni, W., Wang, X., Song, W., Li, Y. (eds) Web Information Systems and Applications. WISA 2019. Lecture Notes in Computer Science, vol 11817. Springer, Cham. https://doi.org/10.1007/978-3-030-30952-7_29

DOI: https://doi.org/10.1007/978-3-030-30952-7_29

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-30951-0

Online ISBN: 978-3-030-30952-7

eBook Packages: Computer Science (R0)