Abstract

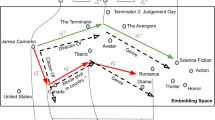

Computing knowledge graph (KG) embeddings is a technique for learning distributional representations of the components of a knowledge graph while preserving structural information. The learned embeddings can be used in multiple downstream tasks such as question answering, information extraction, query expansion, semantic similarity, and information retrieval. Over the past years, multiple embedding techniques have been proposed based on different underlying assumptions. The most actively researched models are translation-based models, which treat relations as translation operations in a shared (or relation-specific) space. Interestingly, almost all KG embedding models treat each triple equally, even though the contribution of each triple to the global information content differs substantially. Many triples can be inferred from others, while some triples are the foundational (basis) statements that constitute a knowledge graph and thereby support other triples. Hence, to learn a suitable embedding model, each triple should be treated differently with respect to its information content. Here, we propose a data-driven approach that uses rule mining and PageRank to measure the information content of each triple with respect to the whole knowledge graph. We show how to compute triple-specific weights to improve the performance of three KG embedding models (TransE, TransR, and HolE). Link prediction tasks on two standard datasets, FB15K and WN18, show the effectiveness of our weighted KG embedding models over other, more complex models. In fact, on FB15K our TransE-RW embedding model outperforms models such as TransE, TransM, TransH, and TransR by at least 12.98% on Mean Rank and at least 1.45% on HIT@10. Our HolE-RW model also outperforms HolE and ComplEx by at least 14.3% on MRR and about 30.4% on HIT@1 on FB15K. Finally, TransR-RW shows an improvement over TransR of 3.90% on Mean Rank and 0.87% on HIT@10.
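To make the idea of triple-specific weights concrete, the following is a minimal sketch of how a per-triple weight could enter a TransE-style margin ranking loss. It is an illustration under stated assumptions, not the method of the paper: the abstract does not say how the weights are combined with the loss, so scaling each hinge term by its weight is an assumption, and the names `transe_score`, `weighted_margin_loss`, `weights`, and `margin` are hypothetical.

```python
import numpy as np


def transe_score(h, r, t):
    """TransE dissimilarity: L2 distance between h + r and t.

    A relation acts as a translation, so a plausible triple has h + r close to t
    and therefore a low score.
    """
    return np.linalg.norm(h + r - t, ord=2, axis=-1)


def weighted_margin_loss(pos_scores, neg_scores, weights, margin=1.0):
    """Margin ranking loss with one weight per positive triple.

    ASSUMPTION: the weight multiplies the hinge term of its triple. With all
    weights equal to 1 this reduces to the usual unweighted margin loss.
    """
    hinge = np.maximum(0.0, margin + pos_scores - neg_scores)
    return float(np.sum(weights * hinge))


# Toy 2-dimensional embeddings for two training triples (h, r, t).
h = np.array([[0.0, 0.0], [1.0, 0.0]])
r = np.array([[1.0, 0.0], [0.0, 1.0]])
t = np.array([[1.0, 0.0], [1.0, 1.0]])

# Corrupted triples: the tail is replaced by another entity.
t_corrupt = np.array([[0.0, 0.0], [1.0, 0.0]])

pos = transe_score(h, r, t)          # both triples fit the translation exactly
neg = transe_score(h, r, t_corrupt)  # corrupted tails are one unit away

# Hypothetical triple weights: the second triple is treated as more informative.
weights = np.array([0.5, 2.0])

uniform_loss = weighted_margin_loss(pos, neg, np.ones(2), margin=2.0)
weighted_loss = weighted_margin_loss(pos, neg, weights, margin=2.0)
print(uniform_loss, weighted_loss)
```

In this toy setting both triples violate the margin by the same amount, so the only difference between the two losses comes from the weights: the triple with the larger weight contributes more to the total and would receive a proportionally larger gradient during training.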


Notes

1. Counter-examples include KG embedding techniques such as RESCAL, which also includes literals [8].

2. Rule-supported Weights.

3. Recall that r stands for a given relation, h for the head, i.e., a triple’s subject, and t for the tail, i.e., an entity in the object position.

4.

5. As [7] points out, MRR is less sensitive to outliers than Mean Rank, so we also report MRR for TransE-RW and TransR-RW.

6. Note that we implement TransR-RW only with the freq weight, as an example.
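The remark that MRR is less sensitive to outliers than Mean Rank can be checked with a small worked example. The sketch below computes the three link prediction metrics named in the abstract from a list of ranks, where a rank is the position of the correct entity among all candidates (1 is best). The rank lists are made-up toy values, and the function names are illustrative.

```python
def mean_rank(ranks):
    """Average rank of the correct entity (lower is better)."""
    return sum(ranks) / len(ranks)


def mean_reciprocal_rank(ranks):
    """Average of 1/rank (higher is better); large ranks contribute almost nothing."""
    return sum(1.0 / r for r in ranks) / len(ranks)


def hits_at_k(ranks, k=10):
    """Fraction of test triples whose correct entity is ranked in the top k."""
    return sum(1 for r in ranks if r <= k) / len(ranks)


# Toy ranks for four test triples; the two lists differ only in one bad prediction.
ranks_a = [1, 2, 4, 100]
ranks_b = [1, 2, 4, 1000]

for name, ranks in (("a", ranks_a), ("b", ranks_b)):
    print(
        name,
        f"MR={mean_rank(ranks):.2f}",
        f"MRR={mean_reciprocal_rank(ranks):.4f}",
        f"HIT@10={hits_at_k(ranks, 10):.2f}",
    )
```

Making the single worst prediction ten times worse moves Mean Rank from 26.75 to 251.75, while MRR only drops from about 0.440 to about 0.438 and HIT@10 stays at 0.75. This is why a few badly ranked triples can dominate Mean Rank but barely affect MRR.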

References

Bordes, A., Usunier, N., Garcia-Duran, A., Weston, J., Yakhnenko, O.: Translating embeddings for modeling multi-relational data. In: Advances in Neural Information Processing Systems, pp. 2787–2795 (2013)

Cochez, M., Ristoski, P., Ponzetto, S.P., Paulheim, H.: Global RDF vector space embeddings. In: d'Amato, C., et al. (eds.) ISWC 2017. LNCS, vol. 10587, pp. 190–207. Springer, Cham (2017). https://doi.org/10.1007/978-3-319-68288-4_12

Fan, M., Zhou, Q., Chang, E., Zheng, T.F.: Transition-based knowledge graph embedding with relational mapping properties. In: Proceedings of the 28th Pacific Asia Conference on Language, Information and Computing (2014)

Galárraga, L., Teflioudi, C., Hose, K., Suchanek, F.M.: Fast rule mining in ontological knowledge bases with AMIE+. VLDB J. 24(6), 707–730 (2015)

Lin, Y., Liu, Z., Sun, M., Liu, Y., Zhu, X.: Learning entity and relation embeddings for knowledge graph completion. In: AAAI, vol. 15, pp. 2181–2187 (2015)

Mai, G., Janowicz, K., Yan, B.: Combining text embedding and knowledge graph embedding techniques for academic search engines. In: SemDeep-4 (2018)

Nickel, M., Rosasco, L., Poggio, T.A., et al.: Holographic embeddings of knowledge graphs. In: AAAI, pp. 1955–1961 (2016)

Nickel, M., Tresp, V., Kriegel, H.P.: A three-way model for collective learning on multi-relational data. In: ICML, vol. 11, pp. 809–816 (2011)

Paulheim, H.: Knowledge graph refinement: a survey of approaches and evaluation methods. Semantic Web 8(3), 489–508 (2017)

Trouillon, T., Dance, C.R., Gaussier, É., Welbl, J., Riedel, S., Bouchard, G.: Knowledge graph completion via complex tensor factorization. J. Mach. Learn. Res. 18(1), 4735–4772 (2017)

Wang, Q., Mao, Z., Wang, B., Guo, L.: Knowledge graph embedding: a survey of approaches and applications. IEEE Trans. Knowl. Data Eng. 29(12), 2724–2743 (2017)

Wang, Z., Zhang, J., Feng, J., Chen, Z.: Knowledge graph embedding by translating on hyperplanes. In: AAAI, vol. 14, pp. 1112–1119 (2014)


Copyright information

© 2018 Springer Nature Switzerland AG

About this paper

Cite this paper

Mai, G., Janowicz, K., Yan, B. (2018). Support and Centrality: Learning Weights for Knowledge Graph Embedding Models. In: Faron Zucker, C., Ghidini, C., Napoli, A., Toussaint, Y. (eds) Knowledge Engineering and Knowledge Management. EKAW 2018. Lecture Notes in Computer Science, vol 11313. Springer, Cham. https://doi.org/10.1007/978-3-030-03667-6_14


DOI: https://doi.org/10.1007/978-3-030-03667-6_14

Published:

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-03666-9

Online ISBN: 978-3-030-03667-6

eBook Packages: Computer Science, Computer Science (R0)