Abstract

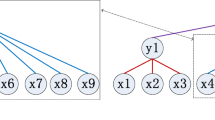

Convolutional neural networks (CNNs) can extract rich features from images. They have been widely used in computer vision (CV) and have achieved major breakthroughs. However, most existing CNN models use only the features output by the last layer, so the feature representation is not comprehensive enough. In this paper, we propose a multilevel feature fusion method that makes full use of intermediate-layer features. This method strengthens feature propagation and improves the accuracy of downstream tasks. We evaluate our method on two image classification benchmarks, CIFAR-10 and CIFAR-100. The experimental results show that our method significantly improves the accuracy of a VGG-like model, and that the improved model outperforms most existing models.
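The idea of using intermediate-layer features alongside the last layer's output can be illustrated with a small sketch. The abstract does not specify how the features are fused, so the rule used here (global average pooling of each level followed by concatenation) is an assumption chosen for illustration, and the names `global_average_pool` and `fuse_multilevel_features` are hypothetical. The toy feature maps are hand-written stand-ins for the outputs of three layers, not values from the paper.

```python
from typing import List

# A feature map is stored as channels x height x width (nested lists).
FeatureMap = List[List[List[float]]]


def global_average_pool(feature_map: FeatureMap) -> List[float]:
    """Reduce each channel of a feature map to its mean activation."""
    pooled = []
    for channel in feature_map:
        values = [v for row in channel for v in row]
        pooled.append(sum(values) / len(values))
    return pooled


def fuse_multilevel_features(levels: List[FeatureMap]) -> List[float]:
    """Concatenate the pooled descriptors of every level.

    Assumed fusion rule for illustration only; the paper's actual
    fusion operator is not described in the abstract.
    """
    fused = []
    for feature_map in levels:
        fused.extend(global_average_pool(feature_map))
    return fused


# Toy feature maps standing in for three layers of a CNN.
shallow = [[[1.0, 3.0], [5.0, 7.0]]]   # 1 channel, 2x2
middle = [[[2.0, 2.0]], [[0.0, 4.0]]]  # 2 channels, 1x2
deep = [[[6.0]], [[8.0]], [[10.0]]]    # 3 channels, 1x1

# Conventional descriptor: last layer only.
last_layer_only = global_average_pool(deep)

# Fused descriptor: all levels contribute.
fused = fuse_multilevel_features([shallow, middle, deep])

print(last_layer_only)  # 3 values, one per channel of the last layer
print(fused)            # 6 values, covering all three levels
```

In this sketch a classifier fed with `fused` sees a 6-dimensional descriptor covering all three levels, whereas one fed with `last_layer_only` sees only the 3 values from the final layer. Pooling before concatenation is used because the levels have different spatial sizes.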

Copyright information

© 2018 Springer Nature Switzerland AG

About this paper

Cite this paper

Zhuo, YF., Wang, YL. (2018). Multilevel Features Fusion in Deep Convolutional Neural Networks. In: Sun, X., Pan, Z., Bertino, E. (eds) Cloud Computing and Security. ICCCS 2018. Lecture Notes in Computer Science, vol 11068. Springer, Cham. https://doi.org/10.1007/978-3-030-00021-9_53

DOI: https://doi.org/10.1007/978-3-030-00021-9_53

Published:

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-00020-2

Online ISBN: 978-3-030-00021-9

eBook Packages: Computer Science, Computer Science (R0)