Abstract

In this paper we develop a methodology, based on Mutual Information and Transfer of Entropy, that allows us to identify, quantify and map on a network the synchronization and anticipation relationships between financial traders. We apply this methodology to a dataset containing \(410\text{,}612\) real buy and sell operations, made by 566 non-professional investors from a private investment firm on 8 different assets from the Spanish IBEX market between 2000 and 2008. The resulting networks have a peculiar topology that differs significantly from that of random networks. We seek alternative features based on human behavior that might explain part of the \(12\text{,}158\) synchronization links and 1031 anticipation links. In particular, we detect that daily synchronization with price (present in 64.90% of investors) and a one-day delay with respect to price (present in 4.38% of investors) play a significant role in the network structure. We find that individuals’ reaction to daily price changes explains around 20% of the links in the Synchronization Network and has significant effects on the Anticipation Network. Finally, we show that using these networks substantially improves the prediction accuracy of Random Forest models used to nowcast and predict the activity of individual investors.


1 Introduction

Human collective behavior has been increasingly studied thanks to the unprecedented amount of data available from the digital world [1]. This has opened a new research area that makes extensive use of multidisciplinary strategies to dive into the empirics with a wide variety of styles and techniques. Approaches in the literature to find dynamical patterns in data, or even to address fundamental research questions, are today rich and diverse. Still, one of the most intriguing aspects that needs further understanding is the non-trivial relationship between individual actions and the aggregate actions of large collectivities [2].

Social networks are an obvious context in which to study these phenomena. There, coordination effects can be observed, for instance amplifying the impact of a street protest on a microblogging platform such as Twitter [3]. The links through which information flows can give rise to macroscopic emergent patterns. Other situations, however, differ from this perspective and allow us to focus neatly on how the macroscopic signal leads to individual actions, simply because there is no direct communication among the individuals. This can also be considered the case for our dataset, which contains the activity of clients of a trading firm whose orders have no significant impact on asset price evolution.

Within the study of collective behavior in financial markets there are several lines of research [4, 5], ranging from computational agent-based models that aim to better understand phenomena such as herding behavior [6,7,8,9] to purely empirical analyses of investors’ activity [10] and data-driven models [11]. Some of these studies focus on bursty trading activity [12, 13] and on the impact of external information flows on price, providing new indicators to measure the degree to which a particular news item attracts investors’ attention [14]. Price shifts due to trading activity and order book imbalances are also being studied, revealing universal patterns that link macroscopic price formation to the individual market and limit orders placed in the order book [15, 16]. Tick-by-tick trading activity indeed displays multifractal behavior, explained by the highly heterogeneous nature of the executed tasks, mostly due to the large diversity of investor profiles [12, 13]. The marked peaks of trading activity and the clusters of very intense activity emerging between calm periods are also linked to the bursty evolution of market volatility [17, 18], a very relevant indicator in traders’ decision mechanisms. Investor behavior also underlies the interpretation of non-trivial market phenomena such as the leverage effect, whereby daily price drops increase the volatility of the following few days [19, 20].

The non-stationary nature of financial series, together with the heterogeneity of investors (who, for instance, operate at different volume scales and time horizons), calls for careful analysis and for the most adequate techniques. It is precisely in this context that non-parametric statistics deploys its most powerful methods. Our analysis is therefore mostly grounded on Mutual Information [21] and Symbolic Transfer of Entropy (STE) [22], which allow us to quantitatively study individual behavioral aspects, such as synchronization and information flows, that are key to identifying higher-order properties like structural hubs, coordinated communities, critical transitions or sudden collapses [23]. STE is in fact a rather new tool in the context of financial markets, where it has mostly been used to analyze cross-market effects [24, 25] and to identify dynamic causal linkages as a complement to other techniques such as network analysis [26, 27], with potentially important consequences for optimizing portfolio composition. In this sense, Mutual Information and STE respectively represent an alternative approach to statistically validated synchronous networks [28] and to their much more recent evolution in the form of statistically validated lead-lag networks [29, 34]. A subsequent study by the same author [35] also analyzed return patterns and investors’ purchases, finding that overall trading by a particular group of investors is excessive. In the 2000s, Grinblatt and Keloharju took advantage of the transparency of the Finnish stock market, where traders’ IDs are recorded in every transaction, and used a database from this market to analyze the performance of different types of traders, categorized first as pro-momentum or contrarian [36] and subsequently as sensation seekers or overconfident traders [37].
Other efforts [11], using the client database of one of the largest online Swiss brokers, found empirical relationships between turnover (contrarian strategies), account value and the number of assets in which a trader invests.

In 2012, Tumminello et al. [31] made a first attempt to identify clusters of investors in the Finnish market using statistically validated synchronous networks [28], an effort that has also served to go deeper into the identification of trading profiles [38]. More recently, the same methods have been applied to identify, in a robust and reliable way, clusters of investors with similar trading profiles and to understand their long-term ecology in light of what Musciotto et al. call the adaptive market hypothesis [33], or even to study systemic risk [32]. Other recent works explore trading similarities among Swedish investors with similar portfolios [39], while Lillo et al. [40] have investigated how news (an exogenous signal) affects the trading behavior of different categories of investors or even how different. Pairwise synchronization between traders’ activity has been used to detect communities and define groups of traders. Challet et al. [29] recently inferred lead-lag networks to predict the sign of the order flow and the volume-weighted average price of broker clients over the next hour. And even more recently Cordi et al. [

\(A_{ij}\). (Top-center) Considering only values within the overlapping period, we codify the position time series into symbols, in this particular case with embedding dimension \(m=2\), to generate the symbolic time series X and Y. (Top-right) We use these symbolic time series to compute the values of Mutual Information \(I_{ij}\) and Transfer of Entropy \(T_{ij}\). In parallel, we apply a bootstrapping process to X and Y to extract a distribution of null values \(I_{ij}^{\ast }\) and \(T_{ij}^{\ast }\), which we use to apply the FDR procedure explained in “Methods”. Then, we keep only the values that are significant at the 95% confidence level, setting the non-significant ones to 0, to create the adjacency lists for the Synchronization Network (Center-right) and the Anticipation Network (Bottom-right) for the REP market.
The networks are built with investors as nodes and edge weights given by the corresponding values of \(I_{ij}\) and \(T_{ij}\), respectively. Node size is proportional to the degree in the Synchronization Network and to the out-degree in the Anticipation Network. Numbers inside each node are investor IDs.

2.1 Data

Most markets do not allow traders to access them directly, so their orders are placed through trading firms. Our raw database reports the trading activity of \(29\text{,}930\) clients, who are small-scale non-professional traders investing their own savings. All their decisions are genuinely human and not taken by any kind of algorithmic trading robot, although some of them may be influenced by external factors. Investors traded over 120 different assets in the BME Spanish stock exchange from 01/01/2000 until 12/31/2008 (1969 trading days). This period does not present a general global trend, although the initial range (2000–2002) has been qualified by experts as a bearish period (that is, Spanish market prices had an overall negative trend during that period), while the subsequent one (2003–2008) has been considered bullish (that is, Spanish market prices had an overall positive trend during that period). In total, the dataset contains \(3\text{,}303\text{,}695\) transactions, where each record includes the ID of the client, the ID of the investment firm’s associated manager, the date of the transaction, the price of the asset, and the number of shares sold or bought. Our database contrasts with those of previous studies of the Finnish market, whose records compile the trading activity of all actors on the trading floor (including households and financial corporations) [33, 38, 40]. Our database is somewhat similar to the ones used in Refs. [11] and [31].

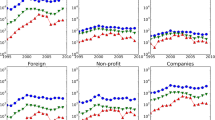

Since our interest is to map a collectivity of investors based on their individual performance, our database needs to fulfill two general criteria. (i) It must contain enough data for each investor, so that behavior can be studied at both the individual and the aggregate level. Therefore, a first filter limits the analysis to those investors whose daily position balance (number of shares bought minus number of shares sold) is different from zero on at least 20 days. (ii) For the investors passing the first filter, we consider only transactions on the 8 most traded assets that retain at least 30 investors after filtering. These assets are very heterogeneous in their number of transactions and also in the business sector of the companies. In decreasing order, they are: Telefonica (TEF), communications sector, with 415 active investors accounting for \(131\text{,}518\) transactions; Santander (SAN), finance sector, with 219 active investors accounting for \(71\text{,}463\) transactions; BBVA (BBVA), finance sector, with 113 active investors accounting for \(53\text{,}388\) transactions; Endesa (ELE), utilities sector, with 86 active investors accounting for \(45\text{,}468\) transactions; Ezentis (EZE), industrial sector, with 88 active investors accounting for \(31\text{,}207\) transactions; Zeltia (ZEL), health care sector, with 71 active investors accounting for \(19\text{,}021\) transactions; Repsol (REP), energy sector, with 62 active investors accounting for \(36\text{,}354\) transactions; and Gas Natural (GAS), utilities sector, with 30 active investors accounting for \(22\text{,}193\) transactions.
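The two filtering criteria can be sketched in Python as below. This is a minimal illustration, not the authors’ pipeline: the nested-dictionary layout and the names `filter_assets` and `active_days` are hypothetical, criterion (i) is assumed to be evaluated per asset, and the final selection of the 8 most traded assets (by number of transactions) is not reproduced.

```python
# Hypothetical data layout: {asset: {investor: {day: daily position balance}}}
MIN_ACTIVE_DAYS = 20   # criterion (i): days with non-zero daily position balance
MIN_INVESTORS = 30     # criterion (ii): investors an asset must retain after filtering


def active_days(balance_by_day):
    """Number of days on which the daily position balance is different from zero."""
    return sum(1 for balance in balance_by_day.values() if balance != 0)


def filter_assets(data, min_days=MIN_ACTIVE_DAYS, min_investors=MIN_INVESTORS):
    """Apply both filters; criterion (i) is evaluated per asset (an assumption)."""
    kept = {}
    for asset, investors in data.items():
        active = {inv: days for inv, days in investors.items()
                  if active_days(days) >= min_days}
        if len(active) >= min_investors:
            kept[asset] = active
    return kept


# Toy example: asset "A" keeps 30 active investors, asset "B" only has 29.
data = {
    "A": {f"inv{k}": {d: 1 for d in range(20)} for k in range(30)},
    "B": {f"inv{k}": {d: -1 for d in range(20)} for k in range(29)},
}
data["A"]["lazy"] = {d: (5 if d < 19 else 0) for d in range(40)}  # only 19 active days
kept = filter_assets(data)
print(sorted(kept), len(kept["A"]))  # ['A'] 30
```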

In summary, and considering that a single investor can trade several assets, we have analyzed the performance of up to 566 different individuals accounting for \(410\text{,}612\) transactions. While these quantities are large enough to study a community of investors, it is nonetheless impossible for this collectivity to have any impact on the market price of any of these assets, especially considering the total daily volume traded for each of them in the Spanish stock market. Consequently, the price signal should never be considered endogenous or generated by these communities of investors.
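As a quick arithmetic check of the figures above, the per-asset transaction counts add up exactly to the \(410\text{,}612\) transactions analyzed, while the per-asset investor counts add up to 1084 investor-asset pairs. This is more than the 566 distinct individuals, which is consistent with investors trading several assets.

```python
# Per-asset (active investors, transactions) as listed in Section 2.1
assets = {
    "TEF": (415, 131_518), "SAN": (219, 71_463), "BBVA": (113, 53_388),
    "ELE": (86, 45_468), "EZE": (88, 31_207), "ZEL": (71, 19_021),
    "REP": (62, 36_354), "GAS": (30, 22_193),
}
total_transactions = sum(tx for _, tx in assets.values())
investor_asset_pairs = sum(n for n, _ in assets.values())
print(total_transactions)    # 410612
print(investor_asset_pairs)  # 1084 (> 566 distinct investors)
```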

The filtered dataset is publicly accessible as described in “Availability of data and materials” section.

2.2 Performance time series and activity periods

Comparing the behavior and performance of two investors only makes sense when they hold or trade the same asset. Therefore, in our analysis we treat the management of each asset as a separate scenario. For each asset, we define \(N_{i}(t)\) as the total number of shares that investor i holds at the end of day t. Accordingly, \(\Delta N_{i}(t) \equiv N_{i}(t) - N_{i}(t-1)\) is the daily change in her position, i.e. the number of shares she bought minus the number of shares she sold during day t. Thus, if \(\Delta N_{i}(t)>0\) her trading volume is dominated by buy orders, whereas sell orders predominate if \(\Delta N_{i}(t)<0\). Note that \(\Delta N_{i}(t)= 0\) does not necessarily imply that investor i did not trade on day t: she may well have behaved as an intra-day trader, holding the same number of shares at the beginning and at the end of the day. Since our data have daily resolution, the information is more relevant when \(\Delta N_{i}(t)\neq 0\), because this implies not only that individual i has been active but also that her decisions reflect a specific daily market orientation.
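The following sketch shows how \(N_{i}(t)\) and \(\Delta N_{i}(t)\) could be obtained from transaction records. It assumes a hypothetical layout of (trading-day index, signed number of shares) pairs and, since holdings prior to the first recorded day are not described above, a zero starting position. Day 0 illustrates the intra-day case discussed in the text; `daily_positions` is a hypothetical name.

```python
from collections import defaultdict


def daily_positions(transactions, first_day, last_day):
    """End-of-day position N_i(t) and daily change dN_i(t) for one investor and one asset.

    transactions: iterable of (day, shares), shares > 0 for buys and < 0 for sells,
    with days given as integer trading-day indices (hypothetical layout).
    The position is assumed to be 0 before first_day.
    """
    net = defaultdict(int)
    for day, shares in transactions:
        net[day] += shares              # buys and sells on the same day cancel out
    N, dN = [], []
    position = 0
    for t in range(first_day, last_day + 1):
        dN.append(net[t])               # dN_i(t) = N_i(t) - N_i(t-1)
        position += net[t]
        N.append(position)              # N_i(t): shares held at the end of day t
    return N, dN


# Day 0 is an intra-day round trip: the investor traded, yet dN_i(0) = 0.
trades = [(0, 100), (0, -100), (1, 50), (3, -20), (3, 70)]
N, dN = daily_positions(trades, 0, 4)
print(N)   # [0, 50, 50, 100, 100]
print(dN)  # [0, 50, 0, 50, 0]
```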

Once \(N_{i}(t)\) is determined for every investor, her activity period \(A_{i}\) can be defined as the time period from her first to her last recorded transaction. When comparing two investors i and j, with activity periods \(A_{i}\) and \(A_{j}\) respectively, we restrict the analysis to their overlapping period \(A_{ij}\). To avoid any confusion in further discussions, we point out that the joint activity period \(A_{ij}\) is not an element of a matrix. If the overlapping period is shorter than 20 days (\(|A_{ij}|<20\)), the pair of investors is considered non-contemporary and the synchronization and anticipation measures are not computed for that pair. To ensure a sufficiently large sample, we also disregard the pair if, within the overlapping period, either of the two investors shows activity (i.e. changes her position) on fewer than 20 days.
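A minimal sketch of these pair-selection rules, assuming activity periods are given as (first day, last day) trading-day indices and daily position changes as dictionaries; `overlap` and `eligible_pair` are hypothetical names, and counting lengths in trading days is our reading of “20 days”.

```python
MIN_DAYS = 20  # minimum overlap length and minimum number of active days


def overlap(period_i, period_j):
    """Overlapping period A_ij of two activity periods given as (first_day, last_day)."""
    start, end = max(period_i[0], period_j[0]), min(period_i[1], period_j[1])
    return (start, end) if start <= end else None


def eligible_pair(period_i, period_j, dN_i, dN_j, min_days=MIN_DAYS):
    """True if SMI/STE should be computed for the pair (i, j).

    dN_i, dN_j: dicts {trading_day: daily position change}; missing days mean no change.
    """
    a_ij = overlap(period_i, period_j)
    if a_ij is None or a_ij[1] - a_ij[0] + 1 < min_days:
        return False                     # non-contemporary pair
    days = range(a_ij[0], a_ij[1] + 1)
    active_i = sum(1 for t in days if dN_i.get(t, 0) != 0)
    active_j = sum(1 for t in days if dN_j.get(t, 0) != 0)
    return active_i >= min_days and active_j >= min_days


dN_i = {t: 1 for t in range(0, 100, 2)}       # active every other day
dN_j = {t: -1 for t in range(50, 81)}         # active on 31 consecutive days
print(overlap((0, 99), (50, 200)))                    # (50, 99)
print(eligible_pair((0, 99), (50, 200), dN_i, dN_j))  # True
```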

2.3 Symbolization, mutual information and transfer of entropy

Considering the nature of the time series \(N_{i}(t)\), we cannot assume that they are either linear or stationary. Moreover, their strong heterogeneity discourages any kind of analysis grounded on linear assumptions [41] and calls instead for more sophisticated tools [42, 43] that can handle implicit non-linear dynamics. In this context, symbolization seems appropriate for comparing agents’ behavior regardless of their capital or typical transaction size. We thus adopt the framework of Bandt and Pompe [44] to symbolize each investor’s position \(N_{i}(t)\), in order to later compute the Symbolic Mutual Information (SMI) and Symbolic Transfer of Entropy (STE) [22] between investors. Due to the nature of our time series, we also introduce an important new feature in the symbolization process: an additional symbol representing unchanging values of \(N_{i}(t)\), that is, \(\Delta N _{i}(t)= 0\). Bandt and Pompe neglected unchanging values because the fluctuations in their series were generated by a continuous distribution, so the probability of observing a chain of constant values was negligible. In the present case, however, the situation is quite the opposite: \(N_{i}(t)=N_{i}(t+1)\) is common (see Figure S1).

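In order to preserve the original nomenclature, we redefine the original daily time series for two investors i and j as

\[ X=\{x_{0},x_{1},\ldots,x_{n}\}\equiv\{N_{i}(t)\},\qquad Y=\{y_{0},y_{1},\ldots,y_{n}\}\equiv\{N_{j}(t)\}, \tag{1} \]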

where t lies within the overlapping activity period \(A_{ij}\), and the sub-indices 0 and n denote the first and last days of this period, respectively. From here, we can transform these numerical time series into series of symbols that depend on sub-pieces of consecutive numerical values. The length of these pieces is given by the embedding dimension m, which in turn defines the number of possible symbols (see Fig. 2). We can thus write

\[ \hat{X}=\{\hat{x}_{0},\hat{x}_{1},\ldots,\hat{x}_{n-m+1}\},\qquad \hat{Y}=\{\hat{y}_{0},\hat{y}_{1},\ldots,\hat{y}_{n-m+1}\}, \tag{2} \]

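where the hat indicates that the series are now encoded as symbols instead of the original numbers. Following the definition of Shannon [21], we compute the Symbolic Mutual Information (SMI) as

\[ I(\hat{X},\hat{Y})=\sum p(\hat{x}_{t},\hat{y}_{t})\,\log\frac{p(\hat{x}_{t},\hat{y}_{t})}{p(\hat{x}_{t})\,p(\hat{y}_{t})}, \tag{3} \]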

where the sum runs over all symbols, \(p(\hat{x}_{t},\hat{y}_{t})\) is the joint probability that two specific symbols appear together, and \(p(\hat{x}_{t})\) and \(p(\hat{y}_{t})\) are the marginal probabilities. If the series X̂ and Ŷ are independent, then \(I(\hat{X},\hat{Y})=0\), which means that the behaviors of investors i and j are unrelated. Conversely, if i and j are completely synchronized, \(\hat{x}_{t}=\hat{y}_{t}\) (∀t), then \(I(\hat{X}, \hat{Y})\) takes its maximum value, which depends on the number of symbols and the embedding dimension m.
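A plug-in (frequency-count) estimate of the SMI definition above takes only a few lines. The natural logarithm is an assumption, since the base is not stated, and the function name is hypothetical. For two identical series with three equally frequent symbols the estimate reaches \(\log 3\), the largest value attainable with three symbols, and it vanishes when one of the series is constant.

```python
import math
from collections import Counter


def symbolic_mutual_information(x_hat, y_hat):
    """Plug-in estimate of I(X̂, Ŷ) = Σ p(x, y) log[p(x, y) / (p(x) p(y))] (natural log)."""
    if len(x_hat) != len(y_hat):
        raise ValueError("symbolic series must cover the same days")
    n = len(x_hat)
    joint = Counter(zip(x_hat, y_hat))
    p_x, p_y = Counter(x_hat), Counter(y_hat)
    return sum(
        (c / n) * math.log((c / n) / ((p_x[a] / n) * (p_y[b] / n)))
        for (a, b), c in joint.items()
    )


x_hat = list("↑↓−") * 10
print(symbolic_mutual_information(x_hat, x_hat))       # log 3 ≈ 1.0986
print(symbolic_mutual_information(x_hat, ["−"] * 30))  # 0.0
```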

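Similarly, the Symbolic Transfer of Entropy (STE) [22] between investors i and j can also be computed. The STE from Ŷ (investor j) to X̂ (investor i) reads

\[ T_{\hat{Y}\rightarrow\hat{X}}=\sum p(\hat{x}_{k+1},\hat{x}_{k},\hat{y}_{k})\,\log\frac{p(\hat{x}_{k+1}\mid\hat{x}_{k},\hat{y}_{k})}{p(\hat{x}_{k+1}\mid\hat{x}_{k})}. \tag{4} \]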

The sum again runs over all symbols, and now both the joint probability \(p(\hat{x}_{k+1},\hat{x}_{k},\hat{y}_{k})\) and the conditional probabilities \(p(\hat{x}_{k+1}|\hat{x}_{k})\) and \(p(\hat{x}_{k+1}|\hat{x}_{k}, \hat{y}_{k})\) include an element, \(\hat{x}_{k+1}\), that introduces a time delay by shifting events one day ahead. Thus, to assess the direction of the entropy transfer flow we need to calculate

\[ T_{ij}=T_{\hat{Y}\rightarrow\hat{X}}-T_{\hat{X}\rightarrow\hat{Y}}, \tag{5} \]

where a positive value means that Ŷ (investor j) anticipates X̂ (investor i), and a negative value means the opposite. It is important to remark that here we use Transfer of Entropy as a tool to reveal information flows and predictive power between variables. Since we do not have access to the complete context and circumstances of all investors, we therefore cannot use it to establish any causal relationship between them [45, 46].
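The sketch below estimates the STE with plug-in frequencies and combines both directions into the net quantity whose sign indicates who anticipates whom. It is an illustration under stated assumptions (natural logarithm, probabilities estimated from the one-day transitions of two aligned symbolic series, hypothetical function names), not the authors’ code. In the toy example investor i repeats investor j’s symbol one day later, so the net value comes out positive, i.e. j anticipates i.

```python
import math
import random
from collections import Counter


def symbolic_transfer_entropy(source, target):
    """Plug-in estimate of T_{source->target} with x = target, y = source:
    Σ p(x_{k+1}, x_k, y_k) log[p(x_{k+1} | x_k, y_k) / p(x_{k+1} | x_k)], natural log."""
    x, y = target, source
    n = len(x) - 1                                   # number of (k, k+1) transitions
    triples = Counter(zip(x[1:], x[:-1], y[:-1]))    # (x_{k+1}, x_k, y_k)
    past_xy = Counter(zip(x[:-1], y[:-1]))           # (x_k, y_k)
    pairs_x = Counter(zip(x[1:], x[:-1]))            # (x_{k+1}, x_k)
    past_x = Counter(x[:-1])                         # x_k
    te = 0.0
    for (x1, x0, y0), c in triples.items():
        p_full = c / past_xy[(x0, y0)]               # p(x_{k+1} | x_k, y_k)
        p_self = pairs_x[(x1, x0)] / past_x[x0]      # p(x_{k+1} | x_k)
        te += (c / n) * math.log(p_full / p_self)
    return te


def net_transfer(x_hat, y_hat):
    """T_ij = T_{Ŷ->X̂} - T_{X̂->Ŷ}; positive if investor j (Ŷ) anticipates investor i (X̂)."""
    return symbolic_transfer_entropy(y_hat, x_hat) - symbolic_transfer_entropy(x_hat, y_hat)


# Toy check: investor i copies investor j's symbol with a one-day delay.
rng = random.Random(0)
y_hat = [rng.choice("↑↓−") for _ in range(500)]
x_hat = ["−"] + y_hat[:-1]
print(net_transfer(x_hat, y_hat) > 0)  # True
```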

Finally, we need to determine the embedding dimension m, i.e. the number of consecutive daily records used to generate the possible symbols. In this work we initially tested both \(m=2\) and \(m=3\), which generate time series with 3 and 13 possible symbols, respectively. However, given the daily resolution of our time series, the results for \(m=3\) were very noisy and the networks barely had any significant link. Nevertheless, \(m>2\) could still be useful for longer or higher-frequency time series, where it could enable a more refined analysis. Hence, we report here the results for \(m=2\), which encodes the position time series with three different symbols: positive change in position, \(\Delta N_{i}(t)>0\) (↑); negative change in position, \(\Delta N_{i}(t)<0\) (↓); and null change in position (or price), \(\Delta N_{i}(t)=0\) (−). Note that for the specific case of \(m=2\) we generate networks very similar to co-occurrence networks [28, 31] or lead-lag networks [29]. However, there is an important difference between lead-lag networks and the anticipation networks based on Transfer of Entropy. The former build a co-occurrence network over a pair of time series where one is lagged with respect to the other, whereas Transfer of Entropy takes into account not only the lag with respect to the second time series but also the lag of the first one (see the conditional probabilities in Eq. (4)). This makes it possible to measure the net flow of information between the two time series.
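The symbolization with the additional “no change” symbol can be sketched by replacing each window of m consecutive values with its dense ranks, so that ties are kept as a symbol of their own instead of being broken as in the original Bandt–Pompe scheme. This encoding of ties is our assumption about how the extra symbol generalizes to \(m>2\); it does reproduce the 3 and 13 possible symbols quoted above for \(m=2\) and \(m=3\). Function names and the toy position series are hypothetical.

```python
from itertools import product


def ordinal_symbol(window):
    """Ordinal pattern that keeps ties: each value is replaced by its dense rank."""
    rank = {v: r for r, v in enumerate(sorted(set(window)))}
    return tuple(rank[v] for v in window)


def symbolize(series, m=2):
    """Symbolic series from overlapping windows of m consecutive daily values."""
    return [ordinal_symbol(series[t:t + m]) for t in range(len(series) - m + 1)]


ARROWS = {(0, 1): "↑", (1, 0): "↓", (0, 0): "−"}  # the three symbols for m = 2

position = [100, 150, 150, 120, 120]             # hypothetical N_i(t)
print([ARROWS[s] for s in symbolize(position)])  # ['↑', '−', '↓', '−']
for m in (2, 3):
    n_symbols = len({ordinal_symbol(w) for w in product(range(m), repeat=m)})
    print(m, n_symbols)                          # 2 3, then 3 13
```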

2.4 Bootstrapping and network construction

Once \(I_{ij}\) and \(T_{ij}\) have been calculated for each pair of investors, we carry out a bootstrapping process of \(10\text{,}000\) iterations to establish the significance level of each link. In each iteration we shuffle the symbolized position series of the two investors within the overlapping period \(A_{ij}\), X̂ and Ŷ, and compute the corresponding values of Mutual Information and Transfer of Entropy, \(I_{ij}^{\ast }\) and \(T_{ij}^{\ast }\), respectively. We determine the significance of the original values \(I_{ij}\) and \(T_{ij}\) based on the resulting null distributions. Since this involves multiple hypothesis testing, we must control the false positive rate and adjust the p-values accordingly. Here we use the FDR controlling procedure known as Benjamini–Hochberg (hereafter “FDR”) and the FWER controlling procedure known as the Bonferroni correction (hereafter “Bonferroni”). Both procedures are standard and have also been used in similar settings to build statistically validated networks [29, 31].
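The shuffling step can be sketched as follows. We assume that both symbolic series are permuted independently in each iteration (the text mentions shuffling X̂ and Ŷ), which keeps the symbol frequencies of each investor but destroys any temporal alignment. `shuffled_null` is a hypothetical name and `match_rate` is a toy stand-in statistic; in practice the SMI and STE estimators would be passed instead.

```python
import random


def shuffled_null(statistic, x_hat, y_hat, n_iter=10_000, seed=0):
    """Null values of statistic(X̂*, Ŷ*) from independent random permutations of both series.

    Shuffling keeps each series' symbol frequencies but destroys any temporal structure.
    """
    rng = random.Random(seed)
    x, y = list(x_hat), list(y_hat)      # work on copies
    null = []
    for _ in range(n_iter):
        rng.shuffle(x)
        rng.shuffle(y)
        null.append(statistic(x, y))
    return null


def match_rate(a, b):
    """Toy stand-in statistic: fraction of days with identical symbols."""
    return sum(p == q for p, q in zip(a, b)) / len(a)


x_hat = list("↑↓−") * 20
null = shuffled_null(match_rate, x_hat, x_hat, n_iter=1_000)
print(match_rate(x_hat, x_hat), max(null) < 1.0)  # 1.0 True
```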

The Bonferroni correction modifies the original significance threshold \(\alpha =0.05\), setting it to \(\alpha / m\), where m is the number of independent tests performed, which in our case is the number of pairs of investors with an overlapping time window \(A_{ij}\) that fulfills the conditions defined above. We then sort the shuffled values \(I_{ij}^{\ast }\) and \(T_{ij}^{\ast }\) and set the significance thresholds given by “Bonferroni”: one-sided for Mutual Information and two-sided for Transfer of Entropy. If the original value lies outside the resulting interval we keep it; otherwise we set it to 0.
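A sketch of the Bonferroni filter on the sorted shuffled values, using a nearest-rank empirical quantile (our choice; the text does not specify the quantile rule). `nearest_rank` and `bonferroni_filter` are hypothetical names. Note that with \(10\text{,}000\) shuffles and many investor pairs, \(\alpha/m\) can fall below \(1/10\text{,}000\), in which case the threshold simply becomes the most extreme shuffled value.

```python
import math


def nearest_rank(sorted_vals, q):
    """Nearest-rank empirical quantile of an already sorted list, q in [0, 1]."""
    idx = math.ceil(q * len(sorted_vals)) - 1
    return sorted_vals[min(len(sorted_vals) - 1, max(0, idx))]


def bonferroni_filter(value, null_values, n_tests, two_sided, alpha=0.05):
    """Keep `value` if it falls outside the Bonferroni-corrected null interval, else 0."""
    s = sorted(null_values)
    level = alpha / n_tests
    if two_sided:                                   # Transfer of Entropy, T_ij
        lower = nearest_rank(s, level / 2)
        upper = nearest_rank(s, 1 - level / 2)
        return value if (value < lower or value > upper) else 0.0
    upper = nearest_rank(s, 1 - level)              # Mutual Information, I_ij
    return value if value > upper else 0.0


null = [k / 1000 for k in range(1000)]  # stand-in for 1000 shuffled values in [0, 0.999]
print(bonferroni_filter(1.5, null, n_tests=10, two_sided=False))  # 1.5
print(bonferroni_filter(0.5, null, n_tests=10, two_sided=True))   # 0.0
```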

Whereas the Bonferroni correction can be very conservative, other procedures, such as Benjamini–Hochberg, control the false discovery rate in a less strict way that allows for more true positives. This method sorts the p-values of the Mutual Information and Transfer of Entropy and then considers significant all the p-values smaller than or equal to the largest p-value fulfilling

\[ p_{(k)}\leq\frac{k}{m}\,\alpha, \tag{6} \]

where \(p_{(k)}\) is the kth smallest p-value, m the number of investor pairs, and \(\alpha =0.05\) the original significance threshold. This method first requires computing the actual p-values. This task is not trivial, since the proportion of the symbols in the underlying series may modify the mean and variance of the distribution of null values, as we show in Figure S2 of Additional file 1. The strategy we follow here consists of computing the mean and standard deviation of the shuffled values. We then determine the p-value of the original \(I_{ij}\) from a gamma distribution [47] and the p-value of the original \(T_{ij}\) from a normal distribution. In all cases, for each pair of investors, we parametrize those distributions with the mean and standard deviation of \(I_{ij}^{\ast }\) and \(T_{ij}^{\ast }\), respectively.
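The p-value step and the Benjamini–Hochberg rule can be sketched as below, assuming that the gamma law is matched to the shuffled values by the method of moments (shape \(\mu^{2}/\sigma^{2}\), scale \(\sigma^{2}/\mu\)) and that the normal p-value for \(T_{ij}\) is two-sided, consistent with the two-sided Bonferroni test; the text only states that both laws are parametrized with the mean and standard deviation. To stay within the Python standard library, the gamma tail is computed with the classic series and continued-fraction scheme popularized by Numerical Recipes; with SciPy available, `scipy.stats.gamma.sf(x, a, scale=theta)` and `scipy.stats.norm.sf` would be natural replacements. All function names are hypothetical.

```python
import math


def upper_gamma_q(a, x):
    """Regularized upper incomplete gamma Q(a, x):
    power series for x < a + 1, Lentz continued fraction otherwise."""
    if x <= 0:
        return 1.0
    log_prefactor = -x + a * math.log(x) - math.lgamma(a)
    if x < a + 1:                                    # series for the lower part P(a, x)
        term = total = 1.0 / a
        ap = a
        for _ in range(10_000):
            ap += 1.0
            term *= x / ap
            total += term
            if abs(term) < abs(total) * 1e-15:
                break
        return 1.0 - total * math.exp(log_prefactor)
    tiny = 1e-300                                    # continued fraction for Q(a, x)
    b = x + 1.0 - a
    c, d = 1.0 / tiny, 1.0 / b
    h = d
    for i in range(1, 10_000):
        an = -i * (i - a)
        b += 2.0
        d = an * d + b
        d = d if abs(d) >= tiny else tiny
        c = b + an / c
        c = c if abs(c) >= tiny else tiny
        d = 1.0 / d
        delta = d * c
        h *= delta
        if abs(delta - 1.0) < 1e-15:
            break
    return math.exp(log_prefactor) * h


def mi_p_value(i_obs, null_mean, null_std):
    """One-sided p-value of I_ij from a gamma law matched to the null mean and std."""
    if null_std == 0:
        return 0.0 if i_obs > null_mean else 1.0
    shape = (null_mean / null_std) ** 2              # k = mu^2 / sigma^2
    scale = null_std ** 2 / null_mean                # theta = sigma^2 / mu
    return upper_gamma_q(shape, i_obs / scale)


def te_p_value(t_obs, null_mean, null_std):
    """Two-sided p-value of T_ij from a normal law with the null mean and std."""
    if null_std == 0:
        return 0.0 if t_obs != null_mean else 1.0
    z = abs(t_obs - null_mean) / null_std
    return math.erfc(z / math.sqrt(2.0))


def benjamini_hochberg(p_values, alpha=0.05):
    """Indices declared significant: all p <= p_(k*), k* = max{k : p_(k) <= k alpha / m}."""
    m = len(p_values)
    order = sorted(range(m), key=lambda idx: p_values[idx])
    k_star = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= rank * alpha / m:
            k_star = rank
    return set(order[:k_star])


print(round(mi_p_value(2.0, null_mean=1.0, null_std=1.0), 4))   # 0.1353 (= e^-2)
print(round(te_p_value(1.96, null_mean=0.0, null_std=1.0), 3))  # 0.05
print(sorted(benjamini_hochberg([0.02, 0.001, 0.5])))           # [0, 1]
```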

Finally, adjacency matrices are built from the \(I_{ij}\) and \(T_{ij}\) values to generate the Synchronization and Anticipation networks, as Fig. 1 shows. In the first case we obtain a weighted undirected network whose nodes represent investors and whose edges indicate how synchronized they are. In the second, we obtain a weighted directed network whose nodes represent investors and whose arrows indicate who anticipates whom.
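Finally, a sketch of how the filtered values could be turned into edge lists for the two networks. Using \(|T_{ij}|\) as the weight of an arrow pointing from the anticipating investor to the anticipated one is our assumption, as are the dictionary layout and the function names; node degree and out-degree are the quantities used for node sizes in Fig. 1.

```python
from collections import Counter


def build_networks(I, T):
    """I, T: {(i, j): value} for investor pairs, non-significant values already set to 0.

    Returns undirected Synchronization edges {frozenset({i, j}): I_ij} and directed
    Anticipation edges {(anticipator, follower): |T_ij|}.
    """
    sync = {frozenset(pair): w for pair, w in I.items() if w != 0}
    antic = {}
    for (i, j), t in T.items():
        if t > 0:
            antic[(j, i)] = t        # investor j (Ŷ) anticipates investor i (X̂)
        elif t < 0:
            antic[(i, j)] = -t       # investor i anticipates investor j
    return sync, antic


def degrees(sync):
    """Node degree in the undirected Synchronization Network."""
    return Counter(node for edge in sync for node in edge)


def out_degrees(antic):
    """Node out-degree in the directed Anticipation Network."""
    return Counter(src for src, _ in antic)


I = {(1, 2): 0.30, (1, 3): 0.0, (2, 3): 0.10}
T = {(1, 2): 0.05, (1, 3): -0.02, (2, 3): 0.0}
sync, antic = build_networks(I, T)
print(degrees(sync)[2], sorted(antic))  # 2 [(1, 3), (2, 1)]
```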