Abstract

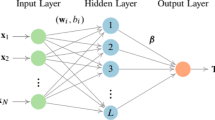

This study presents a new regularized extreme learning machine (ELM) algorithm that performs variable selection by using ridge and Liu regressions simultaneously. It is designed to address several drawbacks of ELM and its variants: instability, poor generalization performance, and lack of sparsity. The proposed algorithm was compared with the classical ELM and with variants based on the ridge, Liu, Lasso, and Elastic Net approaches on seven real-world applications, using cross-validation and the best tuning parameter for each method. On the majority of the datasets, the proposed algorithm outperformed the ridge, Lasso, and Elastic Net algorithms in training prediction performance (by 40% on average) and stability (by 80% on average), and in test prediction performance (by 20% on average) and stability (by 60%). The proposed ELM was also more compact (better sparsity capability), with lower norm values. These experimental results indicate that, under favorable conditions, the proposed ELM addresses the drawbacks mentioned above and yields more stable and sparse solutions with better generalization performance than the competing algorithms. Consequently, owing to its theoretical flexibility, the proposed algorithm is a powerful alternative for both regression and classification tasks in machine learning.
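
For readers unfamiliar with the baselines named in the abstract, the following is a minimal sketch of a classical ELM and its ridge-regularized variant, not the algorithm proposed in the article. The function names (`hidden_layer`, `fit_elm`, `predict_elm`), the sigmoid activation, the number of hidden nodes, the ridge parameter `k`, and the toy data are all assumptions made for illustration. The Liu and L1-norm components of the proposed method are not shown.

```python
import numpy as np

def hidden_layer(X, W, b):
    """Sigmoid hidden-layer output matrix H for inputs X."""
    return 1.0 / (1.0 + np.exp(-(X @ W + b)))

def fit_elm(X, y, n_hidden=20, k=0.0, seed=0):
    """Fit a single-hidden-layer ELM (illustrative helper, hypothetical name).

    k == 0 gives the classical ELM: output weights from the
    Moore-Penrose pseudoinverse of H.
    k > 0 gives a ridge-regularized variant:
    beta = (H'H + k I)^{-1} H'y.
    """
    rng = np.random.default_rng(seed)
    # Input weights and biases are drawn at random and never trained.
    W = rng.normal(size=(X.shape[1], n_hidden))
    b = rng.normal(size=n_hidden)
    H = hidden_layer(X, W, b)
    if k == 0.0:
        beta = np.linalg.pinv(H) @ y
    else:
        beta = np.linalg.solve(H.T @ H + k * np.eye(n_hidden), H.T @ y)
    return W, b, beta

def predict_elm(X, W, b, beta):
    """Predict targets with a fitted ELM."""
    return hidden_layer(X, W, b) @ beta

# Toy regression data (assumed purely for illustration).
rng = np.random.default_rng(1)
X = rng.normal(size=(200, 3))
y = np.sin(X[:, 0]) + 0.5 * X[:, 1] + 0.1 * rng.normal(size=200)

# Same seed, so both fits share the same random hidden layer.
W, b, beta_elm = fit_elm(X, y, k=0.0)
_, _, beta_ridge = fit_elm(X, y, k=1.0)
y_hat = predict_elm(X, W, b, beta_ridge)

# Ridge shrinks the output weights, so its norm is no larger.
print(np.linalg.norm(beta_elm), np.linalg.norm(beta_ridge))
```

Comparing the two printed norms gives a rough sense of what "lower norm values" means for the output weights; sparsity, by contrast, would require an L1-type penalty, which this sketch does not include.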

Data Availability

The datasets generated and/or analyzed during the current study are available in the UCI repository (https://archive-beta.ics.uci.edu/).

Author information

Contributions

Hasan Yıldırım: methodology, investigation, software, data curation, formal analysis, visualization, writing (original draft). M. Revan Özkale: methodology, conceptualization, supervision, writing (review and editing), validation.

Ethics declarations

Competing Interest

The authors declare no competing interests.

About this article

Cite this article

Yıldırım, H., Özkale, M.R. A Novel Regularized Extreme Learning Machine Based on \(L_{1}\)-Norm and \(L_{2}\)-Norm: a Sparsity Solution Alternative to Lasso and Elastic Net. Cogn Comput 16, 641–653 (2024). https://doi.org/10.1007/s12559-023-10220-w

DOI: https://doi.org/10.1007/s12559-023-10220-w