Abstract

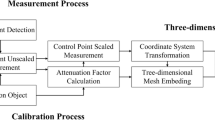

Three-dimensional detection of underwater targets is crucial for recognizing targets and acquiring target information in complex aquatic environments. The underwater robotic operating system, a conventional underwater operating platform, is generally equipped with a binocular or monocular camera; the starting point of this paper is how to use an underwater monocular camera to acquire three-dimensional target information with high precision and high efficiency. To this end, this paper proposes a laser-assisted monocular depth detection method for underwater targets, which uses three cross lasers to help the monocular camera system capture depth data at different positions on the target plane in a single shot. Image correction by the four-point laser calibration method addresses two difficulties: image distortion caused by the unstable underwater environment and lens effects, and laser angle deviation caused by tilting of the underwater robot. After the image is corrected, the depth between the target and the robot is calculated from the geometric relationship between the imaginary rectangle formed by the laser dots and laser lines in the image and the imaginary rectangle formed by the lasers on the device. The method obtains target depth information from a single image and can measure not only horizontal planes but also multiple planes and inclined planes. Experiments show that the algorithm improves accuracy over traditional methods in both underwater and land environments, and that it obtains depth information for the entire plane at once. The method provides a theoretical and practical basis for underwater monocular 3D information acquisition.
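The geometric idea of relating a figure of known size on the device to its projection in the image can be illustrated with a minimal sketch. It is not the paper's algorithm: it assumes an ideal pinhole camera, two parallel laser beams separated by a known baseline, an already corrected image, and no refraction at the housing window. The function name `depth_from_laser_spacing` and all numeric values are hypothetical.

```python
import math


def depth_from_laser_spacing(focal_px, laser_spacing_m, dot_a_px, dot_b_px):
    """Estimate the range to a surface hit by two parallel laser beams.

    Assumption (not taken from the paper): ideal pinhole model, so by
    similar triangles  depth = focal_px * laser_spacing_m / pixel_spacing.
    """
    # Distance between the two detected laser dots in the image, in pixels.
    pixel_spacing = math.dist(dot_a_px, dot_b_px)
    if pixel_spacing == 0:
        raise ValueError("laser dots coincide; depth is undefined")
    return focal_px * laser_spacing_m / pixel_spacing


# Hypothetical values chosen only for illustration.
focal_px = 800.0          # focal length expressed in pixels
laser_spacing_m = 0.10    # physical distance between the two lasers
depth = depth_from_laser_spacing(
    focal_px, laser_spacing_m, (300.0, 240.0), (340.0, 240.0)
)
print(f"estimated depth: {depth:.2f} m")
```

With these illustrative numbers the dots are 40 pixels apart, so the estimate is 800 × 0.10 / 40 = 2.00 m. A real underwater setup would also need the distortion and tilt correction that the abstract attributes to the four-point laser calibration step.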

Data availability

Data available on request from the authors.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: this work was supported by the Natural Science Foundation of Shanghai (No. 14ZR1414900).

Ethics declarations

Competing interest

The authors declare that they have no known competing financial interests or personal relationships that could have appeared to influence the work reported in this paper.

About this article

Cite this article

Tang, Z., Xu, C. & Yan, S. A laser-assisted depth detection method for underwater monocular vision. Multimed Tools Appl 83, 64683–64716 (2024). https://doi.org/10.1007/s11042-024-18167-2

DOI: https://doi.org/10.1007/s11042-024-18167-2