Abstract

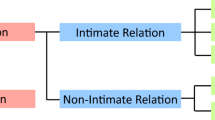

We introduce a new dataset and method for Dyadic Human relation Recognition (DHR), a new task that concerns recognizing the type (i.e., verb) and roles of a two-person interaction. Unlike past human action detection, our goal is to extract richer information about the roles of the actors, i.e., which person (the subject) is acting on which other person (the object). To this end, we introduce the DHR-WebImages dataset, which consists of 22,046 images covering 51 DHR verb classes with per-image annotations of the verb and roles, together with a separate test set for evaluating generalization, which we refer to as DHR-Generalization. We tackle DHR with a novel network inspired by the hierarchical nature of human cognitive perception. At the core of the network lies a “structure-aware attention” module that weights and integrates hierarchical visual cues associated with the DHR instance in the image. The feature hierarchy consists of three levels, namely the union, human, and joint levels, each of which extracts visual features relevant to the participants while modeling their cross-talk. We refer to this network as the Structure-aware Attention Network (SAN). Experimental results show that SAN achieves accurate DHR that is robust to limited visibility of the actors, and outperforms past methods by 3.04 mAP on the DHR-WebImages verb task.
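To make the idea of weighting and integrating cues from several feature levels concrete, the following is a minimal illustrative sketch of softmax attention over three per-level feature vectors. It is not the SAN module itself: the abstract does not specify the scoring function, so the dot-product scoring, the `query` vector, the three-dimensional toy features, and the function names `softmax` and `attention_fuse` are all assumptions made for illustration.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_fuse(level_features, query):
    """Fuse per-level feature vectors with attention weights.

    Each level is scored by the dot product of its feature vector with a
    query vector (an assumed scoring rule, not taken from the paper). The
    scores are normalized with softmax and used to form a weighted sum.
    """
    names = list(level_features)
    scores = [
        sum(f * q for f, q in zip(level_features[name], query))
        for name in names
    ]
    weights = softmax(scores)
    fused = [
        sum(w * level_features[name][i] for w, name in zip(weights, names))
        for i in range(len(query))
    ]
    return dict(zip(names, weights)), fused


# Toy features for the three levels named in the abstract (values are made up).
features = {
    "union": [0.2, 0.1, 0.4],
    "human": [0.5, 0.3, 0.1],
    "joint": [0.1, 0.9, 0.2],
}
query = [1.0, 0.5, 0.0]  # hypothetical query vector

weights, fused = attention_fuse(features, query)
print(weights)
print(fused)
```

In this toy setting the level whose feature vector aligns best with the query receives the largest weight, so the fused vector is dominated by that level while still retaining a contribution from the others.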

Data availability statement

The datasets generated and analysed during the current study are available in the GitHub repository at https://github.com/kaenkogashi/DHR-WebImages.

Acknowledgements

This work was in part supported by JSPS 20H05951, JSPS 21H04893, and JST JPMJCR20G7.

Funding

Partial financial support was received from JSPS 20H05951, JSPS 21H04893, and JST JPMJCR20G7.

Ethics declarations

Conflict of interest

The authors have no relevant financial or non-financial interests to disclose.

About this article

Cite this article

Kogashi, K., Nobuhara, S. & Nishino, K. SAN: Structure-aware attention network for dyadic human relation recognition in images. Multimed Tools Appl 83, 46947–46966 (2024). https://doi.org/10.1007/s11042-023-17229-1

DOI: https://doi.org/10.1007/s11042-023-17229-1